Looking ahead as GKE, the original managed Kubernetes, turns 5

It’s hard to believe that GKE is already celebrating its 5th birthday. Over these last five years, it’s been inspiring to see what businesses have accomplished with Google Cloud and GKE: powering multi-million QPS retail services, helping a game publisher deploy to production 1,700 times during its launch week, accelerating research into treatments for both rare and common conditions in cardiology and immunology, and helping map the human brain. All of this was made possible by Kubernetes.

Today, as Virtual KubeCon kicks off, we want to say, first and foremost, thank you to the community for making Kubernetes what it is: the industry standard for managing containerized applications. GKE has transformed how businesses modernize their applications and pushed the bounds of what Kubernetes can achieve, but that sustained innovation was only possible thanks to support and feedback from Kubernetes users and close partnership with GKE customers.

As we look ahead, we want to share five ways we’re continuing to make GKE the best place to run Kubernetes.

1. Leaving no app behind

Every workload deserves the benefits of portability, isolation, and security. The next wave of Kubernetes users wants to containerize their apps and gain those benefits, but often stumbles over the complexity of getting started. Adopting Kubernetes shouldn’t have to mean doing it the hard way, so GKE has invested heavily in simplifying the entire journey, from creating your first cluster to deploying your first app into production.

GKE customers can now take advantage of Windows on GKE, as well as Google Cloud optimizations and best practices, to smoothly run everything from traditional stateless workloads to complex stateful and batch workloads. For enterprise applications, you have access to practical guidance for PCI DSS on GKE and infrastructure-level compliance resources that help you achieve compliance while reaping the benefits of running your applications on GKE. Even workloads previously stuck on proprietary legacy mainframes can now be migrated to GKE using automated code refactoring tools. You can also run advanced AI/ML workloads at an optimized price-performance ratio with GPU and TPU support, the latter available only in Google Cloud. If you’re interested in deploying GPU workloads across cloud, on-premises, and edge environments, check out our partner NVIDIA’s GPU Operator for Anthos and GKE on-prem, which we are launching this week.

2. Saving money with optimal price-to-performance by default

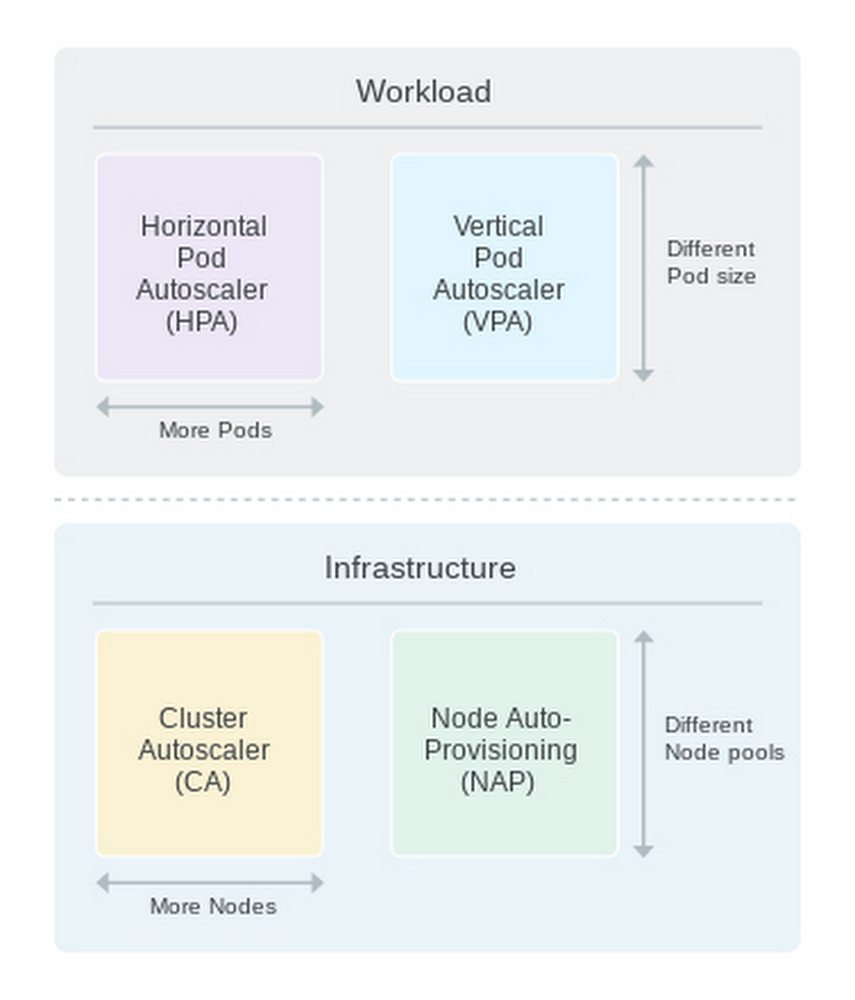

Embracing Kubernetes isn’t just about developer velocity; it’s also about cost optimization. GKE helps organizations improve resource utilization through efficient bin packing and autoscaling. Beyond that baseline operational efficiency, you can achieve considerable additional savings with multi-dimensional autoscaling, which, again, is only available on GKE.

GKE clusters of all sizes can use cluster autoscaling, which can reduce costs compared with statically sized clusters and reduces the work of making sure Kubernetes scales to meet the needs of your business. You can save even more with flexible options for horizontal pod autoscaling based on CPU utilization or custom metrics, as well as vertical pod autoscaling and node auto-provisioning. We’ve published our best practices for running cost-optimized Kubernetes applications on GKE to help you get started.
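To make horizontal pod autoscaling more concrete, here is a minimal Python sketch of the core replica calculation described in the Kubernetes Horizontal Pod Autoscaler documentation: desired replicas = ceil(current replicas × current metric / target metric), left unchanged when that ratio is within a tolerance of 1.0 (0.1 by default), then clamped to the configured minimum and maximum. The function name and the default bounds of 1 and 10 replicas are hypothetical, and the sketch deliberately ignores real-world details such as unready pods, missing metrics, multiple metrics, and scale-down stabilization windows.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,    # hypothetical default bound
                     max_replicas: int = 10,   # hypothetical default bound
                     tolerance: float = 0.1) -> int:
    """Simplified single-metric Horizontal Pod Autoscaler rule (a sketch)."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: leave the replica count alone.
        desired = current_replicas
    else:
        # Scale in proportion to how far the metric is from its target.
        desired = math.ceil(current_replicas * ratio)
    # Respect the configured replica bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    print(desired_replicas(4, 90, 60))   # ratio 1.5 -> ceil(6.0) = 6
    print(desired_replicas(4, 63, 60))   # ratio 1.05 is within tolerance -> 4
    print(desired_replicas(10, 20, 60))  # ceil(3.33...) = 4
```

With a 60% CPU target, four replicas averaging 90% CPU scale to six, while four replicas averaging 63% stay put because the ratio falls inside the tolerance band. That band is what keeps the autoscaler from thrashing on small metric fluctuations.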

3. Container-native networking: no more square pegs in round holes

GKE is at the forefront of container-native networking. A new eBPF-based dataplane in GKE provides built-in Kubernetes network policy enforcement to support multi-tenant workloads, and it increases visibility into network traffic by augmenting it with Kubernetes metadata, which security-conscious enterprises need. VPC-native integration provides IP management features such as flexible pod CIDR and support for non-RFC 1918 IP ranges, letting you better manage your IP space and scale your clusters as needed. With network endpoint groups (NEGs), you can load balance HTTP traffic directly to pods for more stable and efficient networking. You can read more about our networking capabilities in this best practices blog post.
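Flexible pod CIDR comes down to address arithmetic. The sketch below assumes GKE's documented rule that each node receives a pod range with at least twice as many addresses as its maximum pods per node (so the default of 110 pods gets a /24, and 8 pods gets a /28), and computes how many nodes a cluster's pod range can serve. The function names are hypothetical, only IPv4 is handled, the accepted range of 8 to 110 pods per node is an assumption for this sketch, and the result reflects IP capacity only, not other cluster limits.

```python
import ipaddress

# Assumption for this sketch: per-node pod limits between 8 and 110.
MIN_PODS_PER_NODE = 8
MAX_PODS_PER_NODE = 110


def node_pod_prefix(max_pods_per_node: int) -> int:
    """Prefix length of one node's pod range, assuming the range must hold
    at least twice as many addresses as max_pods_per_node."""
    if not MIN_PODS_PER_NODE <= max_pods_per_node <= MAX_PODS_PER_NODE:
        raise ValueError("max_pods_per_node outside the range this sketch supports")
    needed = 2 * max_pods_per_node
    # Host bits for the smallest power of two that is >= needed.
    host_bits = (needed - 1).bit_length()
    return 32 - host_bits


def max_nodes(pod_range: str, max_pods_per_node: int) -> int:
    """Number of per-node pod ranges that fit in the cluster's pod range."""
    network = ipaddress.IPv4Network(pod_range)
    prefix = node_pod_prefix(max_pods_per_node)
    if prefix < network.prefixlen:
        return 0  # The cluster range is smaller than a single node's range.
    return 2 ** (prefix - network.prefixlen)


if __name__ == "__main__":
    for pods in (8, 16, 32, 64, 110):
        print(pods, "pods/node -> /%d," % node_pod_prefix(pods),
              max_nodes("10.4.0.0/14", pods), "nodes in 10.4.0.0/14")
```

Under these assumptions, a /14 pod range supports 1,024 nodes at 110 pods per node but 16,384 nodes at 8 pods per node, which is why lowering the per-node pod limit lets you fit larger clusters into a constrained IP space.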

4. Bringing BeyondProd to containerized apps

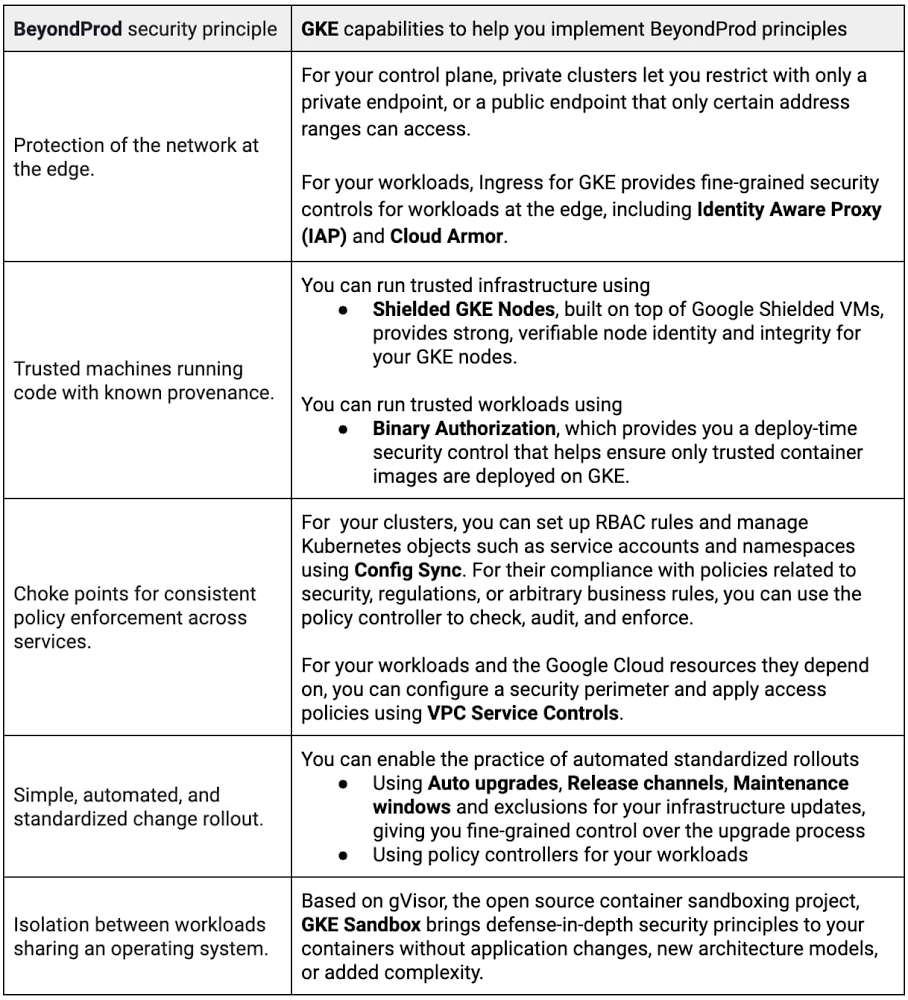

Google protects its own microservices with an initiative called BeyondProd, a new approach to cloud-native security. BeyondProd applies concepts such as mutually authenticated service endpoints, transport security, edge termination with global load balancing, denial-of-service protection, end-to-end code provenance, and runtime sandboxing. GKE brings the BeyondProd methodology to your containerized applications, so your developers can spend less time worrying about security while still achieving more secure outcomes.

5. Democratizing access to learning Kubernetes

Kubernetes, conceived and created at Google, is the industry standard for adopting containers and implementing cloud-native applications. Google is the largest engineering contributor¹ to the Kubernetes project, contributing to almost every subsystem, SIG, and working group. Google also funds and provides almost all of the infrastructure for Kubernetes development. We are deeply committed to continuing these contributions.

The growth and potential of Kubernetes are accelerating its adoption among customers and creating more businesses focused on its distribution, hosting, and services. To wit: there are more than 64,500 job openings related to Kubernetes². To support this growing demand, we continue to provide opportunities to learn Kubernetes through GKE. You already have access to quickstarts, how-to guides, tutorials, and certifications from Coursera and Pluralsight. To make it even easier, from now until December 31, 2020, we’re providing Kubernetes training at no charge. Visit goo.gle/gketurns5 to learn more.

We can’t wait to see what customers will achieve with GKE in the next five years. In the meantime, we’ll leave you with this celebratory ‘5 things developers love about GKE’ video.

1. DevStats from CNCF.io

2. LinkedIn job search results for ‘Kubernetes’ keyword worldwide as of August 12, 2020.
