GCP – Compute Engine explained: Choosing the right machine family and type

Editor’s note: This is the first in a multi-part series to help you get the most out of your Compute Engine VMs.

Have you ever wondered whether you’re using the best possible cloud compute resource for your workloads? In this post, we discuss the different Compute Engine machine families in detail and provide guidance on what factors to consider when choosing your Compute Engine machine family. Whether you’re new to cloud computing, or just getting started on Google Cloud, these recommendations can help you optimize your Compute Engine usage.

For organizations that want to run virtual machines (VMs) in Google Cloud, Compute Engine offers multiple machine families to choose from, each suited for specific workloads and applications. Within every machine family there is a set of machine types that offer a prescribed combination of processor and memory configuration.

- General purpose – These machines balance price and performance and are suitable for most workloads including databases, development and testing environments, web applications, and mobile gaming.
- Compute-optimized – These machines provide the highest performance per core on Compute Engine and are optimized for compute-intensive workloads, such as high performance computing (HPC), game servers, and latency-sensitive API serving.
- Memory-optimized – These machines offer the highest memory configurations across our VM families with up to 12 TB for a single instance. They are well-suited for memory-intensive workloads such as large in-memory databases like SAP HANA and in-memory data analytics workloads.
- Accelerator-optimized – These machines are based on the NVIDIA Ampere A100 Tensor Core GPU. With up to 16 GPUs in a single VM, these machines are suitable for demanding workloads like CUDA-enabled machine learning (ML) training and inference, and HPC.
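As a rough illustration, the four family descriptions above can be read as a first-pass decision rule. The sketch below is only that: the `Workload` fields, the order of the checks, and the function name are hypothetical choices made for this example, not an official selection algorithm.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical workload traits, named after the family descriptions above."""
    needs_a100_gpus: bool = False                      # e.g. CUDA-enabled ML training
    large_in_memory_dataset: bool = False              # e.g. SAP HANA, in-memory analytics
    compute_bound_or_latency_sensitive: bool = False   # e.g. HPC, game servers


def suggest_family(workload: Workload) -> str:
    """Return a starting-point machine family for the given traits.

    The check order is an assumption: the most specialized need wins.
    """
    if workload.needs_a100_gpus:
        return "accelerator-optimized"
    if workload.large_in_memory_dataset:
        return "memory-optimized"
    if workload.compute_bound_or_latency_sensitive:
        return "compute-optimized"
    # Everything else falls back to the balanced price/performance option.
    return "general purpose"


if __name__ == "__main__":
    print(suggest_family(Workload(large_in_memory_dataset=True)))
```

A real decision would also weigh price, region availability, and the size limits discussed in the sections that follow.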

General purpose family

These machines provide a good balance of price and performance, and are suitable for a wide variety of common workloads. You can choose from four general purpose machine types:

- E2 offers the lowest total cost of ownership (TCO) on Google Cloud with up to 31% savings compared to the first generation N1. E2 VMs run on a variety of CPU platforms (across Intel and AMD), and offer up to 32 vCPUs and 128GB of memory per node. E2 machine types also leverage dynamic resource management, which offers many economic benefits for workloads that prioritize cost savings.
- N2 introduced the 2nd Generation Intel Xeon Scalable Processors (Cascade Lake) to Compute Engine’s general purpose family. Compared with first-generation N1 machines, N2s offer a greater than 20% price-performance improvement for many workloads and support up to 25% more memory per vCPU.
- N2D VMs are built on the latest 2nd Gen AMD EPYC (Rome) CPUs, and support the highest core count and memory of any general-purpose Compute Engine VM. N2D VMs are designed to provide you with the same features as N2 VMs including local SSD, custom machine types, and transparent maintenance through live migration.
- N1s are first-generation general purpose VMs and offer up to 96 vCPUs and 624GB of memory. For most use cases we recommend choosing one of the second-generation general purpose machine types above. For GPU workloads, N1 supports a variety of NVIDIA GPUs (see this table for details on specific GPUs supported in each zone).

For flexibility, general purpose machines come as predefined (with a preset number of vCPUs and memory), or can be configured as custom machine types. Custom machine types allow you to independently configure CPU and memory to find the right balance for your application, so you only pay for what you need.
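To make the custom machine type idea concrete, here is a minimal sketch of a helper that builds a custom machine type name from a vCPU count and a memory size. It assumes the naming pattern `SERIES-custom-VCPUS-MEMORY_MB` (with N1 using the bare `custom-` prefix) and a memory size that is a multiple of 256 MB. Both are stated here from memory of the Compute Engine documentation rather than from this post, so verify them before relying on the output. The function name is hypothetical.

```python
def custom_machine_type(series: str, vcpus: int, memory_gb: float) -> str:
    """Build a custom machine type name such as 'n2-custom-4-16384'.

    Assumptions (not stated in this post): memory is expressed in MB,
    must be a multiple of 256 MB, and N1 names omit the series prefix.
    """
    memory_mb = round(memory_gb * 1024)
    if vcpus < 1:
        raise ValueError("vcpus must be at least 1")
    if memory_mb <= 0 or memory_mb % 256 != 0:
        raise ValueError("memory must be a positive multiple of 256 MB")

    series = series.lower()
    prefix = "custom" if series == "n1" else f"{series}-custom"
    return f"{prefix}-{vcpus}-{memory_mb}"


if __name__ == "__main__":
    # 4 vCPUs with 16 GB on N2, and 2 vCPUs with 6.5 GB on N1.
    print(custom_machine_type("n2", 4, 16))
    print(custom_machine_type("n1", 2, 6.5))
```

The helper only formats a name. Each series also has its own limits on vCPU counts and memory per vCPU, which this sketch does not check.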

Let’s take a closer look at the general purpose machine family:

E2 machine types

E2 VMs utilize dynamic resource management technologies developed for Google’s own services that make better use of hardware resources, driving down costs and passing the savings on to you. If you have workloads such as web serving, small-to-medium databases, and application development and testing environments that run well on N1 but don’t require large instance sizes, GPUs or local SSD, consider moving them to E2.

Whether comparing on-demand usage TCO or leveraging committed use discounts, E2 VMs offer up to 31% improvement in price-performance as illustrated below, across a range of benchmarks. E2 pricing already includes sustained use discounts and E2s are also eligible for committed use discounts, bringing additional savings of up to 55% for three-year commitments.

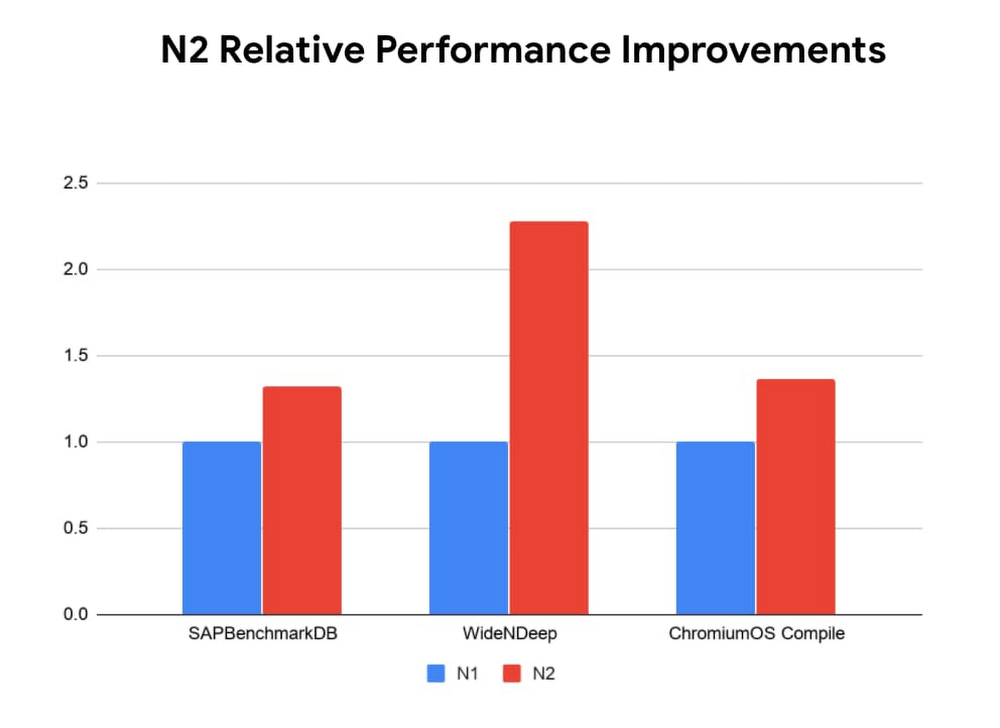

N2 machine types

N2 machines run at 2.8GHz base frequency, and 3.4GHz sustained all-core turbo, and offer up to 80 vCPUs and 640GB of memory. This makes them a great fit for many general purpose workloads that can benefit from increased per core performance, including web and application servers, enterprise applications, gaming servers, content and collaboration systems, and most databases.

Whether you are running a business critical database or an interactive web application, N2 VMs offer you the ability to get ~30% higher performance from your VMs, and shorten many of your computing processes, as illustrated through a wide variety of benchmarks. Additionally, with double the FLOPS per clock cycle compared to previous-generation Intel Advanced Vector Extensions 2 (Intel AVX2), Intel AVX-512 boosts performance and throughput for the most demanding computational tasks.

N2 instances perform 2.82x faster than N1 instances on AI inference of a Wide & Deep model using Intel-optimized TensorFlow, leveraging the new Deep Learning (DL) Boost instructions in 2nd Generation Xeon Scalable Processors. The DL Boost instructions extend the Intel AVX-512 instruction set to do in one instruction what took three instructions on previous-generation processors.

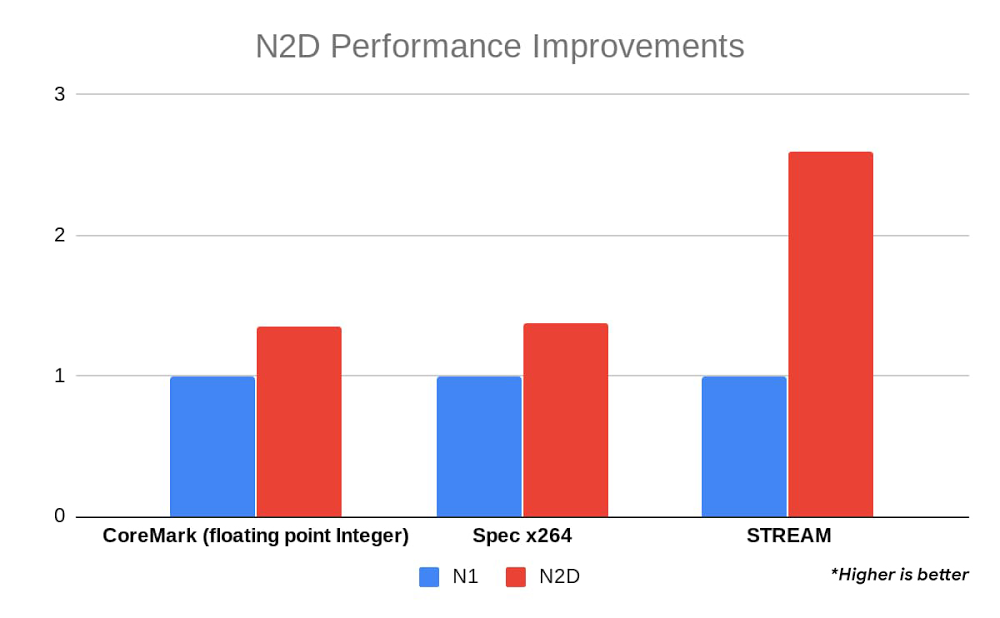
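The "one instruction instead of three" claim for DL Boost can be illustrated with a small model of an 8-bit dot product. The post does not name the instructions involved. The sketch assumes the common reading that a fused instruction (`vpdpbusd` in AVX-512 VNNI) replaces a `vpmaddubsw`, `vpmaddwd`, `vpaddd` sequence, and it models a single 32-bit lane in plain Python while ignoring 32-bit overflow. The function names are hypothetical.

```python
def saturate_int16(value: int) -> int:
    """Clamp to the signed 16-bit range, as the intermediate step does."""
    return max(-32768, min(32767, value))


def three_step_dot(acc: int, a_u8: list, b_s8: list) -> int:
    """Older path: three separate operations for one 4-element dot product."""
    # Step 1: multiply unsigned by signed bytes, add adjacent pairs, saturate to int16.
    pair_sums = [
        saturate_int16(a_u8[i] * b_s8[i] + a_u8[i + 1] * b_s8[i + 1])
        for i in (0, 2)
    ]
    # Step 2: widen and add the two 16-bit partial sums into a 32-bit value.
    widened = pair_sums[0] + pair_sums[1]
    # Step 3: add the result into the running accumulator.
    return acc + widened


def fused_dot(acc: int, a_u8: list, b_s8: list) -> int:
    """Fused path: multiply, sum all four products, and accumulate in one step."""
    return acc + sum(x * y for x, y in zip(a_u8, b_s8))


if __name__ == "__main__":
    a = [1, 2, 3, 4]      # unsigned 8-bit activations
    b = [5, -6, 7, 8]     # signed 8-bit weights
    print(three_step_dot(10, a, b), fused_dot(10, a, b))
```

For typical inputs the two paths agree. They can differ at the extremes because the three-step path saturates its 16-bit intermediate: with two adjacent products of 255 × 127 the pair sum is 64770, which clamps to 32767, while the fused path keeps the full value.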

N2D machine types

N2D VMs provide performance improvements for data management workloads that leverage AMD’s higher memory bandwidth and higher per-system throughput (available with larger VM choices), with up to 224 vCPUs, making them the largest general purpose VM on Google Compute Engine. N2D VMs offer savings of up to 13% over comparable N-series instances.

N2D machine types are suitable for web applications, databases, and video streaming workloads. N2D VMs can also offer a performance improvement for many high-performance computing workloads that would benefit from higher memory bandwidth.

The benchmark below illustrates a 20-30% performance increase across many workload types with up to 2.5X improvements for benchmarks that benefit from N2D’s improved memory bandwidth, like STREAM, making them a great fit for memory bandwidth-hungry applications.

N2 and N2D VMs offer up to 20% sustained use discounts and are also eligible for committed use discounts, bringing additional savings of up to 55% for three-year commitments.

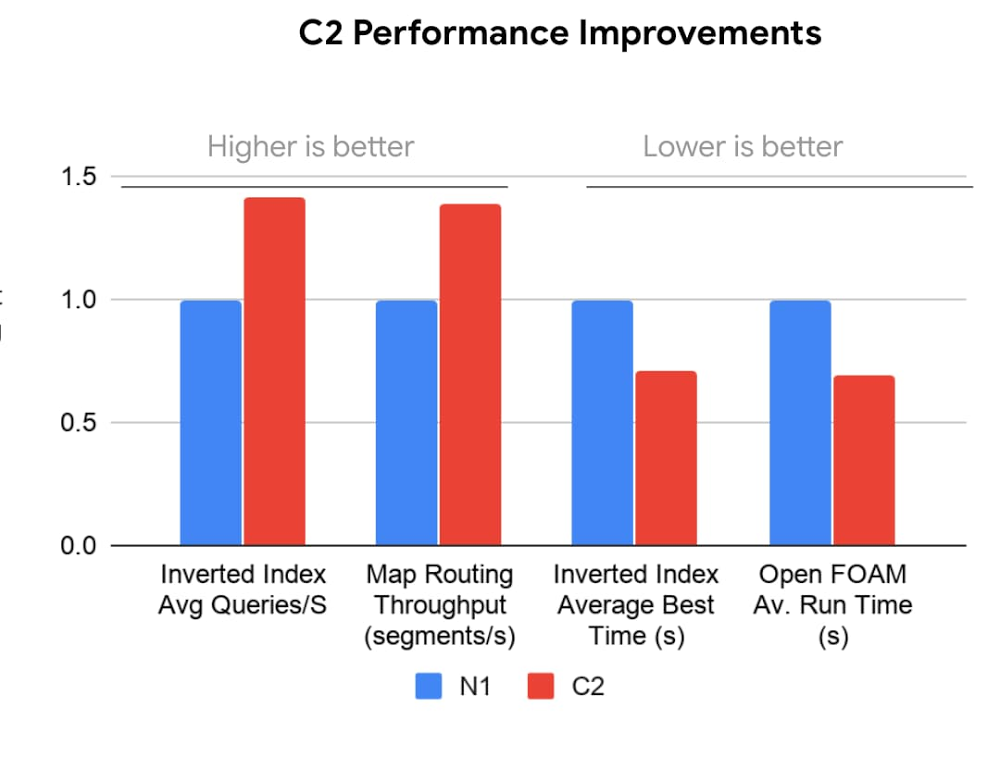
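The N2 and N2D discount figures quoted above are upper bounds, and a quick calculation shows what they mean for a monthly bill. In the sketch below the hourly rate and the 730-hour month are made-up inputs, not real prices, and the two discounts are treated as alternatives rather than stacked, which is an assumption about how they combine.

```python
def discounted_cost(on_demand_hourly: float, hours: float, discount: float) -> float:
    """Cost after applying a fractional discount (0.20 means 20% off)."""
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in the range [0, 1)")
    return on_demand_hourly * hours * (1.0 - discount)


# Hypothetical inputs for illustration only.
HOURLY_RATE = 0.10      # assumed on-demand price per hour, not a real quote
HOURS_PER_MONTH = 730   # assumed full month of continuous use

if __name__ == "__main__":
    scenarios = {
        "on-demand": 0.0,
        "sustained use (up to 20%)": 0.20,
        "3-year commitment (up to 55%)": 0.55,
    }
    for label, discount in scenarios.items():
        cost = discounted_cost(HOURLY_RATE, HOURS_PER_MONTH, discount)
        print(f"{label}: {cost:.2f}")
```

Because both figures are "up to" values, the result is a best case. Actual savings depend on usage patterns and the commitment chosen.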

Compute-optimized (C2) family

Compute-optimized machines focus on the highest performance per core and the most consistent performance to meet the needs of real-time applications. Based on 2nd Generation Intel Xeon Scalable Processors (Cascade Lake), and offering up to 3.8 GHz sustained all-core turbo, these VMs are optimized for compute-intensive workloads such as HPC, gaming (AAA game servers), and high-performance web serving.

Compute-optimized machines produce a greater than 40% performance improvement compared to the previous generation N1 and offer higher performance per thread and isolation for latency-sensitive workloads. Compute-optimized VMs come in different shapes ranging from 4 to 60 vCPUs, and offer up to 240 GB of memory. You can choose to attach up to 3TB of local storage to these VMs for applications that require higher storage performance.

As illustrated below, compute-optimized VMs demonstrate up to 40% performance improvements for most interactive applications, whether you are optimizing for the number of queries per second or the throughput of your map routing algorithms. For many HPC applications, benchmarks such as OpenFOAM indicate that you can see up to 4X reduction in your average runtime.

C2 VMs offer up to 20% sustained use discounts and are also eligible for committed use discounts, bringing additional savings of up to 60% for three-year commitments.

Memory-optimized (M1, M2) family

Memory-optimized machine types offer the highest memory configurations of any Compute Engine machine family. With sizes ranging from 1TB to 12TB of memory and up to 416 vCPUs, these VMs offer the most compute and memory resources of any Compute Engine VM offering. They are well suited for large in-memory databases such as SAP HANA, as well as in-memory data analytics workloads. M1 VMs offer up to 4TB of memory, while M2 VMs support up to 12TB of memory.

M1 and M2 VM types also offer the lowest cost per GB of memory on Compute Engine, making them a great choice for workloads that utilize higher memory configurations with low compute resources requirements. For workloads such as Microsoft SQL Server and similar databases, these VMs allow you to provision only the compute resources you need as you leverage larger memory configurations.

With the addition of 6TB and 12TB VMs to Compute Engine’s memory-optimized machine types (M2), SAP customers can now run their largest SAP HANA databases on Google Cloud. These VMs are the largest SAP certified VMs available from a public cloud provider.

Not only do M2 machine types accommodate the most demanding and business critical database applications, they also support your favorite Google Cloud features. For these business critical databases, uptime is critical for business continuity. With live migration, you can keep your systems up and running even in the face of infrastructure maintenance, upgrades, security patches, and more. And Google Cloud’s flexible committed use discounts let you migrate your growing database from a 1TB-4TB instance to the new 6TB VM while leveraging your current memory-optimized commitments.

M1 and M2 VMs offer up to 30% sustained use discounts and are also eligible for committed use discounts, bringing additional savings of more than 60% for three-year commitments.
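The maximum sizes quoted so far in this post can be collected into a small lookup to see which options could hold a given VM shape. This is a sketch under stated assumptions: the table uses only the upper limits mentioned above, N2D is left out because the post gives its vCPU count (224) but not its memory limit, 12TB is taken as 12 × 1024 GB, and minimum sizes and fixed shapes are ignored. The names `MAX_SIZES` and `families_that_fit` are hypothetical.

```python
# (max vCPUs, max memory in GB) as quoted in this post.
MAX_SIZES = {
    "E2": (32, 128),
    "N2": (80, 640),
    "N1": (96, 624),
    "C2": (60, 240),
    "M1/M2": (416, 12 * 1024),  # assumes 1 TB = 1024 GB
}


def families_that_fit(vcpus: int, memory_gb: float) -> list:
    """Return the options whose quoted maximums cover the requested shape."""
    return [
        name
        for name, (max_vcpus, max_memory_gb) in MAX_SIZES.items()
        if vcpus <= max_vcpus and memory_gb <= max_memory_gb
    ]


if __name__ == "__main__":
    print(families_that_fit(64, 512))
    print(families_that_fit(100, 2000))
```

Passing the upper-bound check does not mean a matching machine type exists. For example, memory-optimized VMs start at 1TB and C2 shapes range from 4 to 60 vCPUs.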

Accelerator-optimized (A2) family

The accelerator-optimized family is the latest addition to the Compute Engine portfolio. A2s are currently available via our alpha program, with public availability expected later this year. The A2 is based on the latest NVIDIA Ampere A100 GPU and was designed to meet today’s most demanding applications such as machine learning and HPC. A2 VMs were the first NVIDIA Ampere A100 Tensor Core GPU-based offering on a public cloud.

Each A100 GPU offers up to 20x the compute performance compared to the previous generation GPU and comes with 40GB of high-performance HBM2 GPU memory. The A2 uses NVIDIA’s HGX system to offer high-speed NVLink GPU-to-GPU bandwidth up to 600 GB/s. A2 machines come with up to 96 Intel Cascade Lake vCPUs, optional Local SSD for workloads requiring faster data feeds into the GPUs, and up to 100Gbps of networking. A2 VMs also provide full vNUMA transparency into the architecture of underlying GPU server platforms, enabling advanced performance tuning.

For very demanding compute workloads, the A2 has the a2-megagpu-16g machine type, which comes with 16 A100 GPUs, offering a total of 640GB of GPU memory and providing up to 10 petaflops of FP16 or 20 petaOps of int8 CUDA compute power in a single VM, when using the new sparsity feature.
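The a2-megagpu-16g totals above follow from the per-GPU figures, and the per-GPU throughput can be recovered by dividing the totals back out. The short calculation below uses only numbers from this section and assumes 1 peta = 1000 tera. The function names are hypothetical.

```python
GPU_COUNT = 16            # GPUs in a2-megagpu-16g
MEMORY_PER_GPU_GB = 40    # HBM2 memory per A100


def total_gpu_memory_gb(gpu_count: int, memory_per_gpu_gb: int) -> int:
    """Aggregate GPU memory across all GPUs in the VM."""
    return gpu_count * memory_per_gpu_gb


def per_gpu_tera(total_peta: float, gpu_count: int) -> float:
    """Convert a VM-wide peta-scale figure into a per-GPU tera-scale figure."""
    return total_peta * 1000 / gpu_count


if __name__ == "__main__":
    print(total_gpu_memory_gb(GPU_COUNT, MEMORY_PER_GPU_GB), "GB of GPU memory")
    print(per_gpu_tera(10, GPU_COUNT), "FP16 teraflops per GPU (with sparsity)")
    print(per_gpu_tera(20, GPU_COUNT), "int8 teraOps per GPU (with sparsity)")
```

The 640GB total matches 16 × 40GB exactly. The throughput figures are "up to" values that depend on the sparsity feature, so the per-GPU numbers are best-case as well.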

Getting the best out of your compute

Choosing the right VM family is the first step in driving efficiency for your workloads. In the coming weeks, we’ll share other helpful information including an overview of our intelligent compute offerings, OS troubleshooting and optimization, licensing, and data protection, to help you optimize your Compute Engine resources. In addition, be sure to read our recent post on how to save on Compute Engine. To learn more about Compute Engine, visit our documentation pages.
