Efficiently serve optimized AI models with NVIDIA NIM microservices on GKE

In the rapidly evolving landscape of AI, serving AI models efficiently is critical to ensuring that the platform delivers value at optimal performance and cost. But the complexities of optimizing and operating an increasing variety of AI models prevent many organizations from fully realizing AI’s value. We’ve been partnering closely with NVIDIA to bring the power of the NVIDIA AI accelerated computing platform to Google Kubernetes Engine (GKE) to address these complexities. Today, we’re thrilled to announce the availability of NVIDIA NIM, part of the NVIDIA AI Enterprise software platform, on GKE, letting you deploy NIM microservices directly from the GKE console.

NVIDIA NIM is a set of containerized microservices for accelerated computing that optimize the deployment of common AI models. NIM microservices run across various environments, including Kubernetes clusters, can be deployed with a single command, and provide standard APIs for seamless integration into generative AI applications and workflows.

The combination of NVIDIA NIM and GKE unlocks new potential for AI model inference, helping organizations deliver optimal latency and throughput with the scale and operational efficiency of GKE. And deploying these capabilities on GKE is easier than ever. With NVIDIA NIM microservices available directly in the GKE console, you can deploy the latest NIM-optimized models, including meta/llama-3.1-70b-instruct, mistralai/mixtral-8x7b-instruct-v0.1 and nvidia/nv-embedqa-e5-v5, on GKE with just a few clicks. This deployment experience expands upon the previously available Helm-based deployment and lets customers deploy the latest NIM models from the NVIDIA API catalog on NVIDIA GPUs orchestrated by GKE, with integrated high-performance storage for model weights.

Writer is transforming work with enterprise-grade AI models optimized for NVIDIA NIM and delivered on GKE:

“Writer is excited to expand our partnership with Google Cloud and NVIDIA to enable us to deliver Writer’s advanced AI models in a highly performant, scalable and efficient way. Together, NVIDIA NIMs and GKE provide outstanding inference performance, making it easy to integrate and scale across different applications. This collaboration improves our deployment abilities and uses advanced technology to ensure top performance and reliability.” – Waseem Alshikh, CTO, Writer, Inc.

The ability to deploy NVIDIA NIM microservices directly to GKE marks an important milestone in Google Cloud’s partnership with NVIDIA.

“With NVIDIA NIM microservices integrated as a ready-to-deploy solution in Google Kubernetes Engine, organizations can bring AI to market faster with models optimized for NVIDIA GPUs that can be efficiently scaled and operated with GKE,” said Abhishek Sawarkar, product manager for NVIDIA AI Enterprise. “We’re seeing significant latency and throughput improvements on popular GenAI models, which can be deployed and scaled on GKE’s production-ready platform in minutes rather than hours.”

Get started with NVIDIA NIM on GKE

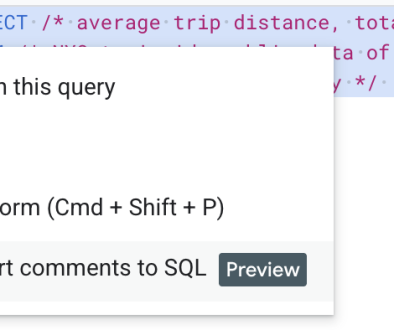

Navigate to Google Kubernetes Engine in the Google Cloud console, select NVIDIA NIM, and launch it to configure your deployment.

In the UI, specify the deployment name and service account information, and confirm that the required APIs are enabled. Specify a unique cluster name and the GPU type and shape for the cluster. Select your model from the drop-down and click Deploy. The deployment creates a new GKE cluster and deploys the specified NIM.

After the deployment has completed successfully, connect to your NIM endpoint with the following commands, where $CLUSTER is the GKE cluster name, $REGION is the cluster’s region, $DEPLOYMENT is the deployment name and $PROJECT is the Google Cloud project in which it was deployed.

    gcloud container clusters get-credentials $CLUSTER --region $REGION --project $PROJECT

    kubectl -n nim port-forward service/my-nim-nim-llm 8000:8000
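Before sending requests, it can help to confirm from a second terminal that the forwarded endpoint is actually ready. The sketch below is one way to do that, not part of the console workflow: it assumes the NIM container exposes the `/v1/health/ready` and `/v1/models` routes described in NVIDIA's NIM for LLMs documentation, and the names `NIM_URL`, `wait_for_nim` and `list_nim_models` are hypothetical helpers introduced here for illustration.

```shell
# Base URL of the port-forwarded NIM service (hypothetical variable name);
# override NIM_URL if you forwarded a different local port.
NIM_URL="${NIM_URL:-http://localhost:8000}"

# wait_for_nim [ATTEMPTS]
# Poll the readiness route (assumed to be /v1/health/ready) once per second.
# Returns 0 as soon as it answers with a successful HTTP status, or 1 after
# ATTEMPTS tries (default 30).
wait_for_nim() {
  attempts="${1:-30}"
  tries=0
  while [ "$tries" -lt "$attempts" ]; do
    if curl -sf -o /dev/null "$NIM_URL/v1/health/ready"; then
      return 0
    fi
    tries=$((tries + 1))
    sleep 1
  done
  return 1
}

# list_nim_models
# Print the JSON list of models the endpoint reports (assumed /v1/models).
list_nim_models() {
  curl -sf "$NIM_URL/v1/models"
}
```

With the port-forward running, `wait_for_nim 60 && list_nim_models` waits up to a minute and then prints the model list, which is a convenient way to confirm the model name to use in later requests. If the port-forward itself fails because the service is named differently in your deployment, `kubectl -n nim get services` lists the services in the namespace.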

Send a test inference to your NIM endpoint with a curl command, specifying the model you selected earlier (this example queries a llama-3.1-8b-instruct model):

    curl -X 'POST' \
      'http://localhost:8000/v1/chat/completions' \
      -H 'accept: application/json' \
      -H 'Content-Type: application/json' \
      -d '{
      "messages": [
        {
          "content": "You are a polite and respectful chatbot helping people plan a vacation.",
          "role": "system"
        },
        {
          "content": "What should I do for a 4 day vacation in Spain?",
          "role": "user"
        }
      ],
      "model": "meta/llama-3.1-8b-instruct",
      "max_tokens": 4096,
      "top_p": 1,
      "n": 1,
      "stream": false,
      "stop": "\n",
      "frequency_penalty": 0.0
    }'
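The endpoint returns a full JSON document, so a small wrapper that prints only the generated text can make testing easier. The following is a minimal sketch under stated assumptions: it assumes the response follows the OpenAI-style chat completions shape, with the reply at `choices[0].message.content`, and the helper names `extract_reply` and `ask_nim` and the variable `NIM_URL` are hypothetical, introduced only for this example.

```shell
# Base URL of the port-forwarded NIM service (hypothetical variable name).
NIM_URL="${NIM_URL:-http://localhost:8000}"

# extract_reply
# Read a chat completions response on stdin and print the text of the first
# choice. Uses jq when it is installed, otherwise falls back to python3.
extract_reply() {
  if command -v jq >/dev/null 2>&1; then
    jq -r '.choices[0].message.content'
  else
    python3 -c 'import json, sys; print(json.load(sys.stdin)["choices"][0]["message"]["content"])'
  fi
}

# ask_nim PAYLOAD_FILE
# POST the JSON request body stored in PAYLOAD_FILE to the chat completions
# route and print only the reply text.
ask_nim() {
  curl -sf "$NIM_URL/v1/chat/completions" \
    -H 'accept: application/json' \
    -H 'Content-Type: application/json' \
    -d @"$1" | extract_reply
}
```

For example, saving the JSON body of the request above to a file such as `request.json` (a hypothetical file name) and running `ask_nim request.json` would print just the model's answer instead of the whole response.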

And that’s it! Now you know how to deploy an NVIDIA NIM microservice to GKE directly from the console, making it easy to enjoy the power of NVIDIA GPUs and software on Google Cloud’s high-performance, reliable containerized infrastructure. Learn more about the Google Cloud and NVIDIA partnership at cloud.google.com/NVIDIA.
