Build supercharged gen AI applications with LangChain and Google Cloud databases

Generative AI is empowering developers, even those without experience in machine learning, to build transformative AI applications. To get started, they need to integrate large language models (LLMs) and other foundation models with operational databases and craft prompts that pull relevant information from various data sources, including their existing enterprise systems.

Role of databases in gen AI

Developers are finding that large language models on their own are insufficient for building high-quality, non-hallucinating enterprise gen AI apps. Operational databases bridge the gap between LLMs and enterprise gen AI apps by grounding the LLMs and the applications in actual enterprise data. This is an implementation of a gen AI technique called Retrieval Augmented Generation (RAG), which many applications are adopting because it lets them incorporate external knowledge from databases for more accurate, domain-specific, and up-to-date results. Vector-enabled databases offer semantic search without compromising security and readiness, while also being easy to use and requiring no data movement.
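To make the RAG flow concrete, here is a minimal sketch in plain Python. It is not the LangChain or Google Cloud API: the `ROWS` list stands in for a vector-enabled database, and `embed` is a hypothetical bag-of-words substitute for a real embedding model. It only illustrates the two steps described above: retrieve relevant rows, then ground the prompt in them.

```python
import math
import re
from collections import Counter

# Toy "operational database". In a real app these rows would live in a
# vector-enabled database and embeddings would come from an embedding model.
ROWS = [
    "Orders over 50 dollars ship free within the United States.",
    "Returns are accepted within 30 days of delivery.",
    "Support is available by chat from 9 AM to 5 PM on weekdays.",
]


def embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: a bag-of-words vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the k rows most similar to the question."""
    q = embed(question)
    return sorted(ROWS, key=lambda row: cosine(q, embed(row)), reverse=True)[:k]


def build_prompt(question: str) -> str:
    """Ground the prompt in retrieved rows before it is sent to an LLM."""
    context = "\n".join(f"- {row}" for row in retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("How many days do I have for returns?")
```

The resulting `prompt` carries the returns-policy row as context, so the model answers from enterprise data rather than from what it memorized during training.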

Streamlining RAG workflows with LangChain and Google Cloud databases

To help application developers build RAG applications more quickly and efficiently, we built a deeper integration with LangChain, a popular open-source LLM orchestration framework. Last year we shared reference patterns for leveraging Vertex AI embeddings, foundation models and vector search capabilities with LangChain to build generative AI applications. Developers now have access to a suite of LangChain packages for leveraging Google Cloud’s database portfolio, giving them additional flexibility and customization to drive the most value from their private data.

Each package will have up to three LangChain integrations:

Document loader for loading and storing information from documents

Vector stores to enable semantic search for our databases that support vectors

Chat Messages Memory to enable chains to recall previous conversations
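As a rough illustration of what a document loader and a chat message memory do, the sketch below uses Python's built-in `sqlite3` as a stand-in for a Google Cloud database. The `Document`, `load_documents`, and `ChatHistory` names are hypothetical and are not the classes shipped in the packages. The shape, text content plus metadata per row and messages stored per session, is an assumption modeled on how LangChain describes these components.

```python
import sqlite3
from dataclasses import dataclass, field


@dataclass
class Document:
    """Minimal document shape: text content plus metadata."""
    page_content: str
    metadata: dict = field(default_factory=dict)


def load_documents(conn: sqlite3.Connection, table: str, content_column: str) -> list[Document]:
    """Document-loader sketch: one Document per row, other columns become metadata."""
    # The table name is interpolated, so pass only trusted identifiers here.
    cursor = conn.execute(f"SELECT * FROM {table}")
    columns = [c[0] for c in cursor.description]
    docs = []
    for row in cursor:
        record = dict(zip(columns, row))
        content = record.pop(content_column)
        docs.append(Document(page_content=content, metadata=record))
    return docs


class ChatHistory:
    """Chat-message-memory sketch: messages persisted per session in a table."""

    def __init__(self, conn: sqlite3.Connection, session_id: str) -> None:
        self.conn, self.session_id = conn, session_id
        conn.execute(
            "CREATE TABLE IF NOT EXISTS chat_history "
            "(id INTEGER PRIMARY KEY, session_id TEXT, role TEXT, content TEXT)"
        )

    def add_message(self, role: str, content: str) -> None:
        self.conn.execute(
            "INSERT INTO chat_history (session_id, role, content) VALUES (?, ?, ?)",
            (self.session_id, role, content),
        )

    def messages(self) -> list[tuple[str, str]]:
        rows = self.conn.execute(
            "SELECT role, content FROM chat_history WHERE session_id = ? ORDER BY id",
            (self.session_id,),
        )
        return rows.fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (sku TEXT, name TEXT, description TEXT)")
conn.execute("INSERT INTO products VALUES ('A1', 'Trail shoe', 'Lightweight shoe for rocky trails')")

docs = load_documents(conn, "products", "description")
history = ChatHistory(conn, session_id="user-42")
history.add_message("human", "Which shoe is good for trails?")
history.add_message("ai", f"Consider: {docs[0].metadata['name']}")
```

Because the history is keyed by `session_id`, a later request in the same session can read `history.messages()` and pass the earlier turns back to the model.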

This gives developers the flexibility to build complex workflows and to easily swap out underlying components (such as a vector database) as needed for their specific use cases. Examples include personalized product recommendations, question answering, document search and synthesis, customer service automation, and more.
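The idea of swapping a component without touching application code can be sketched with a shared interface. Everything below is hypothetical: the `VectorStore` protocol is only loosely modeled on the `add_texts` and `similarity_search` methods that LangChain vector stores expose, and both stores use a toy word-overlap score instead of real embeddings.

```python
from typing import Protocol


class VectorStore(Protocol):
    """Hypothetical minimal interface shared by every store in this sketch."""

    def add_texts(self, texts: list[str]) -> None: ...
    def similarity_search(self, query: str, k: int = 1) -> list[str]: ...


def _overlap(query: str, text: str) -> int:
    """Toy relevance score: number of shared lowercase words."""
    return len(set(query.lower().split()) & set(text.lower().split()))


class InMemoryStore:
    """Keeps texts in a Python list; handy for local tests."""

    def __init__(self) -> None:
        self._texts: list[str] = []

    def add_texts(self, texts: list[str]) -> None:
        self._texts.extend(texts)

    def similarity_search(self, query: str, k: int = 1) -> list[str]:
        return sorted(self._texts, key=lambda t: _overlap(query, t), reverse=True)[:k]


class DictBackedStore:
    """Stands in for a database-backed store: same interface, different storage."""

    def __init__(self) -> None:
        self._rows: dict[int, str] = {}

    def add_texts(self, texts: list[str]) -> None:
        for text in texts:
            self._rows[len(self._rows)] = text

    def similarity_search(self, query: str, k: int = 1) -> list[str]:
        ranked = sorted(self._rows.values(), key=lambda t: _overlap(query, t), reverse=True)
        return ranked[:k]


def answer_context(store: VectorStore, question: str) -> str:
    """Application code depends only on the interface, not on the backend."""
    return store.similarity_search(question, k=1)[0]


texts = ["Spanner is a globally distributed database", "Redis is an in-memory cache"]
results = []
for store in (InMemoryStore(), DictBackedStore()):
    store.add_texts(texts)
    results.append(answer_context(store, "which database is globally distributed"))
```

`answer_context` never changes when the backend does, which is the property that lets a team start with one database and move to another as requirements evolve.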

The LangChain Vector stores integration is available for Google Cloud databases with vector support, including AlloyDB, Cloud SQL for PostgreSQL, Memorystore for Redis, and Spanner.

The Document loaders and Memory integrations are available for all Google Cloud databases including AlloyDB, Cloud SQL for MySQL, PostgreSQL and SQL Server, Firestore, Bigtable, Memorystore for Redis, El Carro for Oracle databases, and Spanner.

This table lists the Python packages that are now available.

| Google Cloud database | GitHub repo |
| --- | --- |
| Cloud SQL for PostgreSQL | langchain-google-cloud-sql-pg-python |
| Cloud SQL for MySQL (no Vector Store) | langchain-google-cloud-sql-mysql-python |
| Cloud SQL for SQL Server (no Vector Store) | langchain-google-cloud-sql-mssql-python |
| AlloyDB for PostgreSQL | langchain-google-alloydb-pg-python |
| Spanner | langchain-google-spanner-python |
| Bigtable (no Vector Store) | langchain-google-bigtable-python |
| Memorystore for Redis | langchain-google-memorystore-redis-python |
| Firestore (in Native mode) | langchain-google-firestore-python |
| Firestore (in Datastore mode) | langchain-google-datastore-python |
| El Carro for Oracle Databases | |

LangChain’s integration with Google Cloud databases provides access to accurate and reliable information stored in an organization’s databases, enhancing the credibility and trustworthiness of LLM responses. It also improves contextual understanding by pulling in relevant information from databases, resulting in highly relevant and personalized responses tailored to user needs. This provides:

Accelerated development: LangChain encodes best practices for RAG applications, allowing development teams to get started quickly;

Interoperability: LangChain provides standard interfaces for various components needed to build RAG applications, giving developers the flexibility and ease to swap between different components;

Observability: Because LLMs are a central component of RAG applications, there is an inherently large amount of non-determinism, which makes observability more important than ever. Developers have access to LangSmith, a built-in observability platform.
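To show the kind of information observability tooling captures, here is a small generic tracing sketch. It is not LangSmith and does not use its API. The `traced` decorator, the `TRACE` list, and the placeholder `retrieve` and `generate` steps are all hypothetical. The point is only that recording each step's inputs, output, and latency makes a non-deterministic pipeline easier to debug.

```python
import functools
import time

TRACE: list[dict] = []  # hypothetical in-process trace log


def traced(step_name: str):
    """Record inputs, output, and latency for each call to the wrapped step."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            output = fn(*args, **kwargs)
            TRACE.append({
                "step": step_name,
                "inputs": {"args": args, "kwargs": kwargs},
                "output": output,
                "seconds": time.perf_counter() - start,
            })
            return output
        return wrapper
    return decorator


@traced("retrieve")
def retrieve(question: str) -> list[str]:
    # Placeholder retrieval step; a real one would query a vector store.
    return ["Returns are accepted within 30 days."]


@traced("generate")
def generate(question: str, context: list[str]) -> str:
    # Placeholder for a non-deterministic LLM call.
    return f"Based on policy: {context[0]}"


question = "What is the return window?"
answer = generate(question, retrieve(question))
```

After one request, `TRACE` holds one entry per step in call order, so an unexpected answer can be traced back to the context that was retrieved for it.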

New era of generative AI application development

Collaboration with LangChain supports our long-term commitment to be an open, integrated, and innovative database platform. With LangChain’s broad reach (over 5 million downloads monthly), this integration introduces a new era of AI development, enabling application developers to build cutting-edge generative AI applications that are not only intelligent, but also knowledge-driven and firmly grounded in reality.

In addition to the above offerings, Vertex AI also provides a fully managed, purpose-built search engine for RAG applications. Please see this link for details.

Getting started

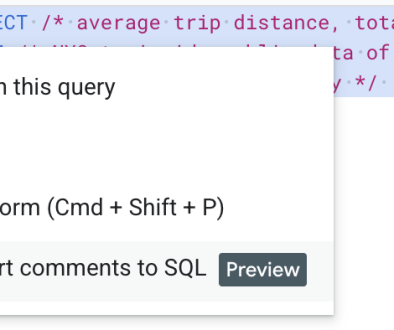

If you’re interested in getting started with our LangChain integrations, consider trying out the sample quickstart application as a starting point. In the associated notebook, we provide a detailed explanation of how to use the LangChain Document loader, Vector store, and Chat Messages Memory integrations. For step-by-step guidance, you can also check out the following Codelabs:

Cloud SQL for PostgreSQL: GitHub link, Colab

Memorystore: GitHub link, Colab

AlloyDB for PostgreSQL: GitHub link, Colab

Spanner: GitHub link, Colab

To learn more about these capabilities, join our Data Cloud Innovation Live webcast on March 7, 2024, 9:00 AM – 10:00 AM PST, where product engineering will cover the latest innovations across AlloyDB for PostgreSQL, Spanner, BigQuery, and Looker.
