GCP – Announcing Mistral AI’s Large-Instruct-2411 on Vertex AI

In July, we announced the availability of Mistral AI’s models on Vertex AI: Codestral for code generation tasks, Mistral Large 2 for high-complexity tasks, and the lightweight Mistral Nemo for reasoning tasks like creative writing. Today, we’re announcing that Mistral AI’s newest model, Mistral-Large-Instruct-2411, is now generally available on Vertex AI Model Garden.

Large-Instruct-2411 is an advanced dense large language model (LLM) with 123B parameters and strong reasoning, knowledge, and coding capabilities. It extends its predecessor with better long-context handling, function calling, and system prompt handling. The model is well suited to complex agentic workflows that need precise instruction following and JSON outputs, large-context applications that require strong adherence for retrieval-augmented generation (RAG), and code generation.

You can access and deploy the new Mistral AI Large-Instruct-2411 model on Vertex AI through our Model-as-a-Service (MaaS) or self-service offering today.

What can you do with the new Mistral AI models on Vertex AI?

By building with Mistral’s models on Vertex AI, you can:

- Select the best model for your use case: Choose from a range of Mistral AI models, including efficient options for low-latency needs and powerful models for complex tasks like agentic workflows. Vertex AI makes it easy to evaluate and select the optimal model.

- Experiment with confidence: Mistral AI models are available as fully managed Model-as-a-Service on Vertex AI. You can explore Mistral AI models through simple API calls and comprehensive side-by-side evaluations within our intuitive environment.

- Manage models without overhead: Simplify how you deploy the new Mistral AI models at scale with fully managed infrastructure designed for AI workloads and the flexibility of pay-as-you-go pricing.

- Tune the models to your needs: In the coming weeks, you will be able to fine-tune Mistral AI’s models to create bespoke solutions, with your unique data and domain knowledge.

- Craft intelligent agents: Create and orchestrate agents powered by Mistral AI models, using Vertex AI’s comprehensive set of tools, including LangChain on Vertex AI. Integrate Mistral AI models into your production-ready AI experiences with Genkit’s Vertex AI plugin.

- Build with enterprise-grade security and compliance: Leverage Google Cloud’s built-in security, privacy, and compliance measures. Enterprise controls, such as Vertex AI Model Garden’s new organization policy, provide the right access controls to make sure only approved models can be accessed.
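
As a rough illustration of the "simple API calls" mentioned above, here is a minimal Python sketch that only assembles a request and does not send it. It assumes the MaaS endpoint follows Vertex AI's publisher-model `rawPredict` URL pattern and accepts a chat-style JSON body. The project ID, region, and model ID shown are placeholders, and the helper `build_chat_request` is hypothetical, so check the official documentation for the exact values before relying on it.

```python
import json


def build_chat_request(project_id: str, region: str, model: str, prompt: str):
    """Assemble (url, body) for a chat-style request to a Mistral model.

    Assumption: the endpoint uses the publisher-model rawPredict pattern
    and a chat-style payload. Nothing is sent over the network here.
    """
    url = (
        f"https://{region}-aiplatform.googleapis.com/v1/"
        f"projects/{project_id}/locations/{region}/"
        f"publishers/mistralai/models/{model}:rawPredict"
    )
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return url, json.dumps(body)


if __name__ == "__main__":
    # Placeholder values; replace with your own project, region, and model ID.
    url, body = build_chat_request(
        project_id="my-project",
        region="us-central1",
        model="mistral-large-2411",
        prompt="Summarize retrieval-augmented generation in one sentence.",
    )
    print(url)
    print(body)
```

To send the request, you would POST the body to the URL with an `Authorization: Bearer <access token>` header obtained from your Google Cloud credentials.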

Get started with Mistral AI models on Google Cloud

These additions continue Google Cloud’s commitment to open and flexible AI ecosystems that help you build solutions best suited to your needs. Our collaboration with Mistral AI is a testament to our open approach within a unified, enterprise-ready environment. Vertex AI provides a curated collection of first-party, open-source, and third-party models. Many of them, including the new Mistral AI models, can be delivered as a fully managed Model-as-a-Service (MaaS) offering, giving you the simplicity of a single bill and enterprise-grade security on our fully managed infrastructure.

To start building with Mistral’s newest models, visit Model Garden and select the Mistral Large model tile. The models are also available on Google Cloud Marketplace here: Mistral Large.

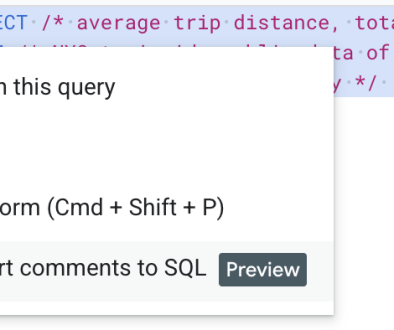

You can check out our sample code and documentation to help you get started.
