GCP – Announcing LangChain on Vertex AI for AlloyDB and Cloud SQL for PostgreSQL

Among application developers, LangChain is one of the most popular open-source LLM orchestration frameworks. To help developers use LangChain to create context-aware gen AI applications with Google Cloud databases, in March we open-sourced LangChain integrations for all of our Google Cloud databases, including vector stores, document loaders, and chat message history. And now, we’re excited to announce our managed integration with LangChain on Vertex AI for AlloyDB and Cloud SQL for PostgreSQL.

Support for LangChain on Vertex AI lets developers build, deploy, query, and manage AI agents and reasoning frameworks in a secure, scalable, and reliable way. Application developers can leverage the LangChain open-source library to build and deploy custom gen AI applications that connect to Google Cloud resources such as databases and existing Vertex AI models.

With LangChain on Vertex AI, developers get access to:

A streamlined framework for swiftly building and deploying enterprise-grade AI agents

A ready-to-use LangChain agent template to kickstart development

A managed service to securely and scalably deploy, serve, and manage AI agents

A collection of easily deployable, end-to-end templates for different gen AI reference architectures leveraging Google Cloud databases such as AlloyDB and Cloud SQL

Specifically, LangChain on Vertex AI enables developers to deploy their applications to a Reasoning Engine managed runtime, a Vertex AI service that offers the advantages of Vertex AI integration, including security, privacy, observability, and scalability.
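
As a rough illustration of what gets deployed to such a runtime: the Vertex AI documentation for custom applications describes a Python object that exposes a `query` method, plus an optional `set_up` method for initialization that should run in the serving environment. The sketch below follows that shape in plain Python. The class name `OrderAssistant`, its canned answer, and the keyword lookup are hypothetical stand-ins for a real LangChain agent, and nothing here calls Vertex AI.

```python
class OrderAssistant:
    """Hypothetical application object; the canned answers stand in for a real agent."""

    def __init__(self, greeting: str = "Hello"):
        # Keep the constructor light: store plain configuration only.
        self.greeting = greeting
        self._answers = None

    def set_up(self) -> None:
        # Expensive initialization (model clients, database connection pools)
        # would go here so that it runs inside the serving environment.
        self._answers = {
            "return window": "Orders can be returned within 30 days.",
        }

    def query(self, question: str) -> dict:
        # Initialize lazily so the object also works when called locally.
        if self._answers is None:
            self.set_up()
        for keyword, answer in self._answers.items():
            if keyword in question.lower():
                return {"input": question, "output": f"{self.greeting}! {answer}"}
        return {"input": question, "output": f"{self.greeting}! I do not know yet."}


app = OrderAssistant()
print(app.query(question="What is the return window?")["output"])
```

Testing the object locally like this before deployment keeps the feedback loop short; the deployment step itself requires a Google Cloud project and is not shown.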

Unlocking new database use cases

Integrating Google Cloud databases with LangChain on Vertex AI unlocks a range of powerful use cases for organizations, including:

Querying databases: Ask the model to transform questions like “What percentage of orders are returned?” into SQL queries and create functions that submit these queries to AlloyDB, Cloud SQL for PostgreSQL, and others.

Knowledge retrieval: Using databases with vector support such as AlloyDB and Cloud SQL for PostgreSQL lets you semantically search unstructured data to provide models with context.

Chat bots: By creating functions that connect to databases and business APIs, the model can deliver accurate responses to queries such as “Do you have the Pixel 8 Pro in stock?” or “Can I visit a store in Mountain View, CA to try it out?”

Tool use: Developers can create a function that connects to various data sources/databases and APIs, for example currency exchange, Google Maps, weather, language translation, etc. This allows models to provide accurate answers to queries such as “What’s the weather like in Paris?” or “What’s the exchange rate for euros to dollars today?”
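
The "function that submits a query" pattern behind these use cases can be sketched as follows. This is a minimal illustration, not the integration's own code: it uses Python's built-in `sqlite3` as a stand-in for AlloyDB or Cloud SQL for PostgreSQL, and the `orders` table, the `run_readonly_query` function, and the generated SQL string are all hypothetical.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    # In-memory stand-in for an operational database (hypothetical schema).
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, returned INTEGER NOT NULL)"
    )
    # Four orders, one of which was returned.
    conn.executemany(
        "INSERT INTO orders (returned) VALUES (?)", [(0,), (0,), (0,), (1,)]
    )
    conn.commit()
    return conn


CONN = make_demo_db()


def run_readonly_query(sql: str) -> list:
    """Run a single read-only SQL query and return the result rows.

    Agent frameworks typically show a tool's docstring to the model,
    so it should say clearly what the function does.
    """
    statement = sql.strip().rstrip(";")
    # Crude guard for the sketch: accept exactly one SELECT statement.
    if not statement.lower().startswith("select") or ";" in statement:
        raise ValueError("only a single SELECT statement is allowed")
    return CONN.execute(statement).fetchall()


# SQL a model might produce for "What percentage of orders are returned?"
GENERATED_SQL = "SELECT 100.0 * SUM(returned) / COUNT(*) FROM orders;"
print(run_readonly_query(GENERATED_SQL))  # [(25.0,)]
```

The string check is only a convenience. In a real deployment, the stronger protection is a database user that has read-only privileges on just the tables the agent needs.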

Turbocharging AI development at TM Forum

TM Forum, a telecommunications industry consortium, is an early user of LangChain on Vertex AI for their AI Virtual Assistant (TM Forum AIVA). Here is what Richard May, Vice President of Technology, Data & Digital Experience at TM Forum had to say about their experience:

“During a two-day hackathon, LangChain on Vertex AI, powered by the Reasoning Engine, played a crucial role in the success of a 20-person hackathon involving Deutsche Telekom, Jio, Telefonica, and Telenor.

A single TM Forum Innovation Hub Developer was able to integrate backend functions to search an extensive library of TM Forum assets and to generate code with just a few lines of code, deploy fully managed web services with just one call, and test their agentic workflows in just one week. The integration with Google Cloud IAM and API Gateway made meeting security and governance goals straightforward.

Using Reasoning Engine, hackathon participants showcased a wide range of solutions, ranging from enhancing customer satisfaction to enabling AIOps. They seamlessly plugged their data and business process flows into TM Forum AIVA, built with Reasoning Engine. The platform remained consistently available for multiple days under intensive use without any technical issues.

We are moving to productize the service based on strong demand from our member companies.”

Integrating with AlloyDB and Cloud SQL

For AlloyDB and Cloud SQL for PostgreSQL users, LangChain on Vertex AI delivers several benefits, namely:

Fast knowledge retrieval: Connecting LangChain on Vertex AI to an AlloyDB or Cloud SQL for PostgreSQL database with the existing AlloyDB and Cloud SQL LangChain packages makes it easier for developers to build knowledge-retrieval applications. Developers can use AlloyDB and Cloud SQL vector stores to store and semantically search unstructured data to provide models with additional context. The vector store integrations let you filter metadata effectively, provide flexibility in connecting to pre-existing vector embedding tables, and help improve performance with custom vector indexes.

Secure authentication and authorization: LangChain integrations to Google Cloud databases utilize best practices for creating database connection pools, authenticating, and authorizing access to database instances using the principle of least privilege.

Chat history context: Many gen AI applications rely on conversation history to stay aware of earlier context and to handle multi-turn follow-up questions. Developers can take advantage of these database integrations to easily store and retrieve chat message histories.

Fast prototyping: The readily available LangChain templates help developers build quickly and get to production with a single SDK call. They can explore and iterate on new ideas without depending on the release of a particular data connector for an external system or API, since they can connect AI agents directly to any API or data source through the Reasoning Engine. Instead of requiring developers to manage the development process manually, the Reasoning Engine runtime generates a compliant API based on the library within minutes, with a single click.

Managed deployment: With Reasoning Engine, developers can leverage a fully managed service that uses Vertex AI’s infrastructure and prebuilt containers to deploy, productionize, and scale applications with a simple API call, quickly turning locally tested prototypes into enterprise-ready deployments. By handling autoscaling, regional expansion, and container vulnerabilities, the Vertex AI Reasoning Engine managed runtime frees developers from developing application servers, creating containers, and configuring authentication, IAM, and scaling processes.
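
To make the chat history benefit above concrete, here is a minimal sketch of a per-session message store backed by one relational table. It is not the API of the AlloyDB or Cloud SQL LangChain packages: `sqlite3` stands in for PostgreSQL, and the `SqlChatHistory` class, the `chat_history` table, and its columns are hypothetical.

```python
import sqlite3


class SqlChatHistory:
    """Hypothetical per-session chat message store backed by one table."""

    def __init__(self, conn: sqlite3.Connection, session_id: str):
        self.conn = conn
        self.session_id = session_id
        # Create the table on first use, keyed by session.
        conn.execute(
            "CREATE TABLE IF NOT EXISTS chat_history ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "session_id TEXT NOT NULL, "
            "role TEXT NOT NULL, "
            "content TEXT NOT NULL)"
        )

    def add_message(self, role: str, content: str) -> None:
        # Parameterized insert; the autoincrement id preserves message order.
        self.conn.execute(
            "INSERT INTO chat_history (session_id, role, content) VALUES (?, ?, ?)",
            (self.session_id, role, content),
        )
        self.conn.commit()

    def messages(self) -> list:
        # Return this session's messages only, oldest first.
        return self.conn.execute(
            "SELECT role, content FROM chat_history "
            "WHERE session_id = ? ORDER BY id",
            (self.session_id,),
        ).fetchall()


conn = sqlite3.connect(":memory:")
history = SqlChatHistory(conn, session_id="session-a")
history.add_message("human", "Do you have the Pixel 8 Pro in stock?")
history.add_message("ai", "Yes, it is in stock.")
history.add_message("human", "Can I visit a store to try it out?")
other = SqlChatHistory(conn, session_id="session-b")
print(history.messages())
```

Keying every row by session is what lets a follow-up question be answered with only that conversation's earlier turns as context.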

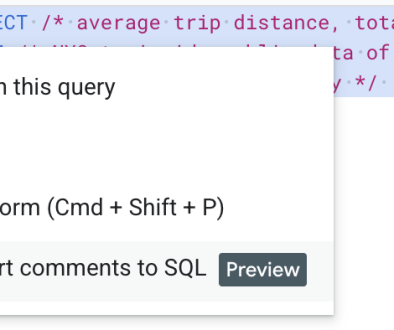

The table below shows a comparison of developer workflow steps with and without LangChain on Vertex AI for AlloyDB and Cloud SQL for PostgreSQL. You can see how this integration significantly simplifies many common tasks.

| Workflow step | Without LangChain on Vertex AI for AlloyDB and Cloud SQL | With LangChain on Vertex AI for AlloyDB and Cloud SQL |
| --- | --- | --- |
| IAM auth | Manually set up and manage database IAM authorization and user authentication | Leverage readily available best practices for connecting and authenticating to Google Cloud databases |
| Database table management and semantic search | Manually define and implement semantic vector similarity search as SQL queries; define, create, and manage database tables for conversation history | Vector store integrations make it fast to implement custom similarity searches; built-in methods construct relational tables for vector stores and conversation history |
| Develop LangChain code | To build, deploy, and operate an application: develop an application server with FastAPI, Django, etc. so that the code (such as LangChain) can be served via an HTTP API; be proficient with Docker and build the code into a Docker container locally; provision Cloud Run instances and configure authentication, IAM, scaling, etc.; expose the Cloud Run endpoint to users. Also develop custom RAG chains and agents | Simply call reasoning_engine.create(); leverage pre-built templates for RAG chains and agents |
| Infrastructure operation | User code is deployed to the user’s project; the user takes care of the entire operational workload | User code is deployed to a tenant project; LangChain on Vertex AI takes care of autoscaling, container vulnerabilities, etc. |
| Vertex AI ecosystem benefits | Manually add logging, monitoring, and tracing | Leverage built-in observability features such as Cloud Logging, Monitoring, and Tracing |
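
For a sense of the manual path in the comparison above ("manually define and implement semantic vector similarity search as SQL queries"), the sketch below builds such a query by hand. It assumes a PostgreSQL table with a pgvector column and uses pgvector's `<=>` cosine-distance operator. The table name `documents`, the column names, and the `build_similarity_query` helper are hypothetical, and the `%s` placeholders assume a psycopg-style driver. Only the query text is constructed here; no database is contacted.

```python
import re

# SQL identifiers cannot be passed as query parameters, so validate them.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def build_similarity_query(
    table: str,
    embedding: list,
    k: int = 4,
    content_column: str = "content",
    embedding_column: str = "embedding",
) -> tuple:
    """Return (sql, params) for a k-nearest-neighbor search by cosine distance."""
    for name in (table, content_column, embedding_column):
        if not _IDENTIFIER.fullmatch(name):
            raise ValueError(f"unsafe identifier: {name!r}")
    # pgvector accepts vectors in the text form '[x1,x2,...]'.
    vector_literal = "[" + ",".join(str(float(x)) for x in embedding) + "]"
    sql = (
        f"SELECT {content_column} FROM {table} "
        f"ORDER BY {embedding_column} <=> %s::vector LIMIT %s"
    )
    return sql, (vector_literal, k)


sql, params = build_similarity_query("documents", [0.1, 0.2, 0.3], k=2)
print(sql)
print(params)
```

Even this small helper has to deal with identifier safety, vector serialization, and the choice of distance operator, which is the kind of detail the vector store integrations are meant to take off the developer's plate.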

Getting started

In short, the availability of AlloyDB and Cloud SQL for PostgreSQL LangChain integrations in Vertex AI opens up a wealth of new possibilities for building AI-based applications that use authoritative data from your operational databases. To get started, take a look at our Notebook-based tutorials:

Deploying a RAG Application with AlloyDB to LangChain for Vertex AI

Deploying a RAG Application with Cloud SQL for Postgres to LangChain for Vertex AI

In addition, check out the templates that highlight advanced use cases, such as building and deploying a question-answering RAG application and an agent with a RAG tool and memory.
