GCP – 229 things we announced at Google Cloud Next 25 – a recap

Google Cloud Next 25 took place this week and we’re all still buzzing! It was a jam-packed week in Las Vegas complete with interactive experiences, including more than 10 keynotes and spotlights, 700 sessions, and 350+ sponsoring partners joining us for an incredible Expo show. Attendees enjoyed hands-on learning across AI innovation, data cloud, modern infrastructure, security, Google Workspace, and more.

At our opening keynote, we showcased cutting-edge product innovations across our AI-optimized platform and featured hundreds of customers and partners building with Google Cloud as well as five awesome demos. You can catch up on all the highlights in our 10-minute keynote recap.

Our developer keynote showed how AI is revolutionizing the developer workflow, and featured seven incredible demos on everything from building with Gemini to creating multi-agent systems.

Last year, we shared how customers were exploring the exciting potential of generative AI to transform the way they work. This year, we showcased how customers are getting real business value from Google AI, celebrating hundreds of customer stories across the event, including the amazing story of how The Sphere is using Google AI to enrich their fully immersive The Wizard of Oz experience.

It was a busy week, so we’ve prepared a summary of all 229 announcements from Next ’25 below:

AI and Multi-Agent Systems

Models: Building on Google DeepMind research, we announced the addition of a variety of first-party models, as well as new third-party models to Vertex AI Model Garden.

1. Gemini 2.5 Pro is available in public preview on Vertex AI, AI Studio, and in the Gemini app. Gemini 2.5 Pro is engineered for maximum quality and tackling the most complex tasks demanding deep reasoning and coding expertise. It is ranked #1 on Chatbot Arena.

2. Gemini 2.5 Flash — our low latency and most cost-efficient thinking model — is coming soon to Vertex AI, AI Studio, and in the Gemini app.

3. Imagen 3: Our highest quality text-to-image model now has improved image generation and inpainting capabilities for reconstructing missing or damaged portions of an image.

4. Chirp 3: Our audio generation and understanding model now includes Instant Custom Voice, a new way to create custom voices with just 10 seconds of audio input.

5. Lyria: The industry’s first enterprise-ready, text-to-music model, transforms simple text prompts into 30-second music clips.

6. Veo 2: Our advanced video generation model has new editing and camera control features to help customers refine and repurpose video content with precision.

(Check out our fun keynote countdown clock made with Veo 2).

7. Meta’s Llama 4 is generally available on Vertex AI.

8. Ai2 announced a partnership with Google Cloud to make its portfolio of open models available in Vertex AI Model Garden.

Together, this makes Vertex AI the only hyperscaler platform with generative media models across video, image, speech, and music.

To build on your AI agent initiatives, we announced new advancements in Vertex AI to help you better tune, deploy and manage the right model:

9. Vertex AI Dashboards: These help you monitor usage, throughput, latency, and troubleshoot errors, providing you with greater visibility and control.

10. Model Customization and Tuning: You can also manage custom training and tuning with your own data on top of foundational models in a secure manner across all first-party model families including Gemini, Imagen, Veo, embedding, and translation models, as well as open models like Gemma, Llama, and Mistral.

11. Vertex AI Model Optimizer: Automatically generate the highest quality response for each prompt based on your desired balance of quality and cost.

12. Live API: Offers streaming audio and video directly into Gemini. Now your agents can process and respond to rich media in real time, opening new possibilities for immersive, multimodal applications.

13. Vertex AI Global Endpoint: Provides capacity-aware routing for our Gemini models across multiple regions, maintaining application responsiveness even during peak traffic or regional service fluctuations.
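To make the idea of capacity-aware routing in item 13 concrete, here is a minimal sketch in plain Python. It is not how the Vertex AI Global Endpoint is implemented and it calls no Google Cloud API; the `Region` class, the `pick_region` function, and the capacity figures are all hypothetical, and the region names are used only as labels. The sketch simply sends each request to the healthy region with the most free headroom.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    capacity: int    # max concurrent requests (hypothetical figure)
    in_flight: int   # requests currently being served
    healthy: bool = True

    @property
    def headroom(self) -> float:
        """Fraction of capacity still free, from 0.0 to 1.0."""
        return max(0.0, (self.capacity - self.in_flight) / self.capacity)


def pick_region(regions):
    """Return the healthy region with the most free headroom, or None."""
    candidates = [r for r in regions if r.healthy and r.headroom > 0]
    if not candidates:
        return None
    return max(candidates, key=lambda r: r.headroom)


# Hypothetical fleet: one busy region, one with spare capacity, one unhealthy.
regions = [
    Region("us-central1", capacity=100, in_flight=95),
    Region("europe-west4", capacity=80, in_flight=20),
    Region("asia-northeast1", capacity=60, in_flight=10, healthy=False),
]

chosen = pick_region(regions)
print(chosen.name)
```

A real router would also weigh latency to the caller and data-residency constraints; this sketch looks only at spare capacity and health.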

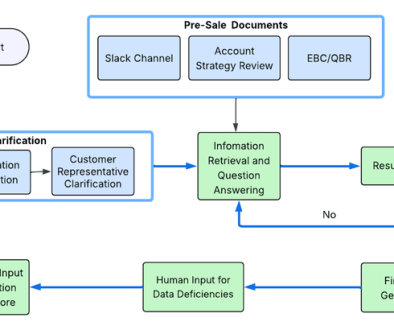

We also introduced new capabilities to help you build and manage multi-agent systems — regardless of which technology framework or model you’ve chosen.

14. Agent Development Kit (ADK): This open-source framework simplifies the process of building sophisticated multi-agent systems while maintaining precise control over agent behavior. Agent Development Kit supports the Model Context Protocol (MCP) which provides a unified way for AI models to access and interact with various data sources and tools, rather than requiring custom integrations for each.

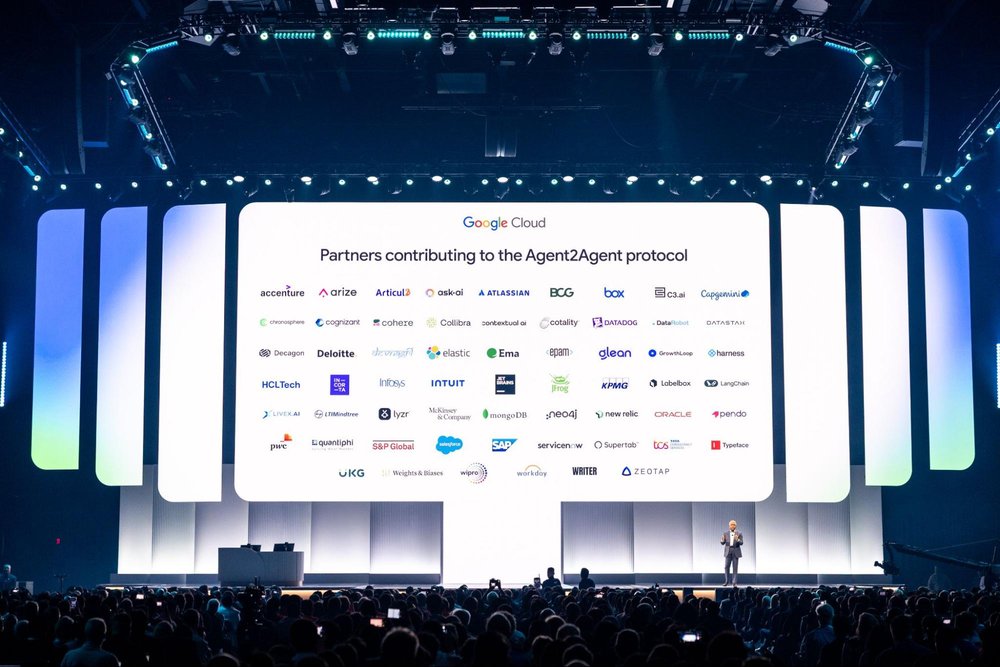

15. Agent2Agent (A2A) protocol: We’re proud to be the first hyperscaler to create an open Agent2Agent protocol to help enterprises support multi-agent ecosystems, so agents can communicate with each other, regardless of the underlying framework or model. More than 50 partners, including Accenture, Box, Deloitte, Salesforce, SAP, ServiceNow, and TCS are actively contributing to defining this protocol, representing a shared vision of multi-agent systems.

16. Agent Garden: This collection of ready-to-use samples and tools is directly accessible in ADK. Leverage pre-built agent patterns and components to accelerate your development process and learn from working examples.

17. Agent Engine: This fully managed agent runtime in Vertex AI helps you deploy your custom agents to production with built-in testing, release, and reliability at a global, secure scale.

18. Grounding with Google Maps: For agents that rely on geospatial context, you can now ground your agents with Google Maps, so they can provide responses with geospatial information tied to places in the U.S.

19. Customer Engagement Suite: This latest version includes human-like voices; the ability to understand emotions so agents can adapt better during conversation; streaming video support so AI agents can interpret and respond to what they see in real-time through customer devices; and AI assistance to build agents in a no-code interface.

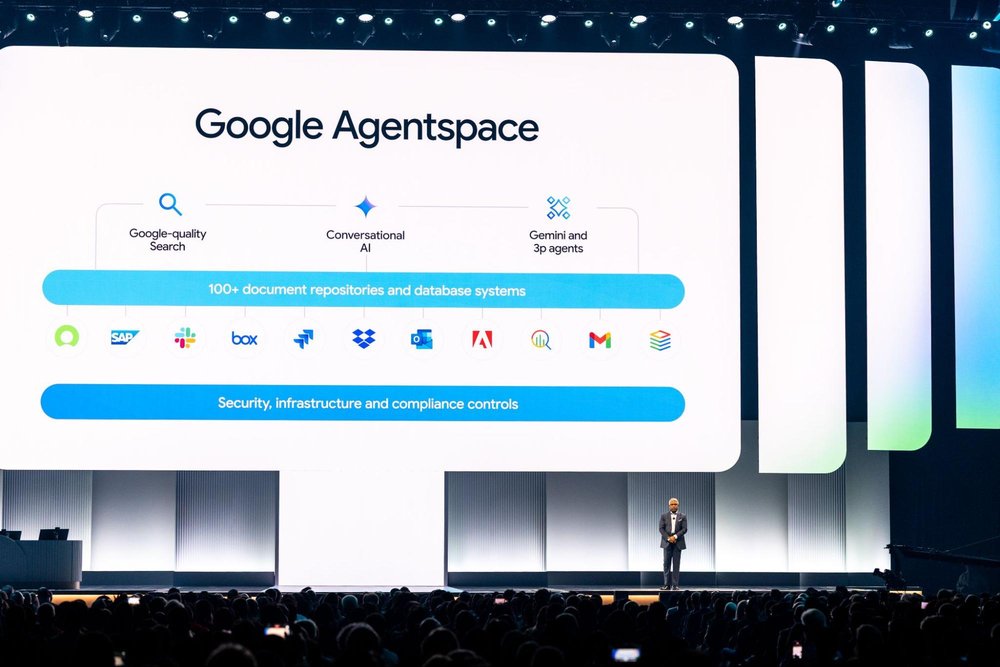

We announced exciting enhancements to Google Agentspace to help scale the adoption of enterprise search and AI agents across the enterprise. Agentspace puts the latest Google foundation models, Google-quality search, powerful AI agents, and actionable enterprise knowledge in the hands of every employee.

20. Integrated with Chrome Enterprise: Bringing Agentspace directly into Chrome helps employees easily and securely find information, including data and resources, right within their existing workflows.

21. Agent Gallery: This provides employees a single view of available agents across the enterprise, including those from Google, internal teams, and partners — making agents easy to discover and use.

22. Agent Designer: A no-code interface for creating custom agents that automate everyday work tasks or enhance knowledge. Agent Designer helps employees adapt agents to their individual workflows and needs, no matter their technical experience.

23. Idea Generation agent: Helps employees innovate by autonomously developing novel ideas in any domain, then evaluating them to find the best solutions via a competitive system inspired by the scientific method.

24. Deep Research agent: Explores complex topics on the employee’s behalf, synthesizing information across internal and external sources into comprehensive, easy-to-read reports — all with a single prompt.

We brought the best of Google DeepMind and Google Research together with new infrastructure and AI capabilities in Google Cloud, including:

25. AlphaFold 3: Developed by Google DeepMind and Isomorphic Labs, the new AlphaFold 3 High-Throughput Solution, available for non-commercial use and deployable via Google Cloud Cluster Toolkit, enables efficient batch processing of up to tens of thousands of protein sequences while minimizing cost through autoscaling infrastructure.

26. WeatherNext AI models: Google DeepMind and Google Research WeatherNext models enable fast, accurate weather forecasting, and are now available in Vertex AI Model Garden, allowing organizations to customize and deploy them for various research and industry applications.

AI infrastructure

We’re pleased to introduce new innovations throughout the AI Hypercomputer stack that collectively deliver more intelligence per dollar:

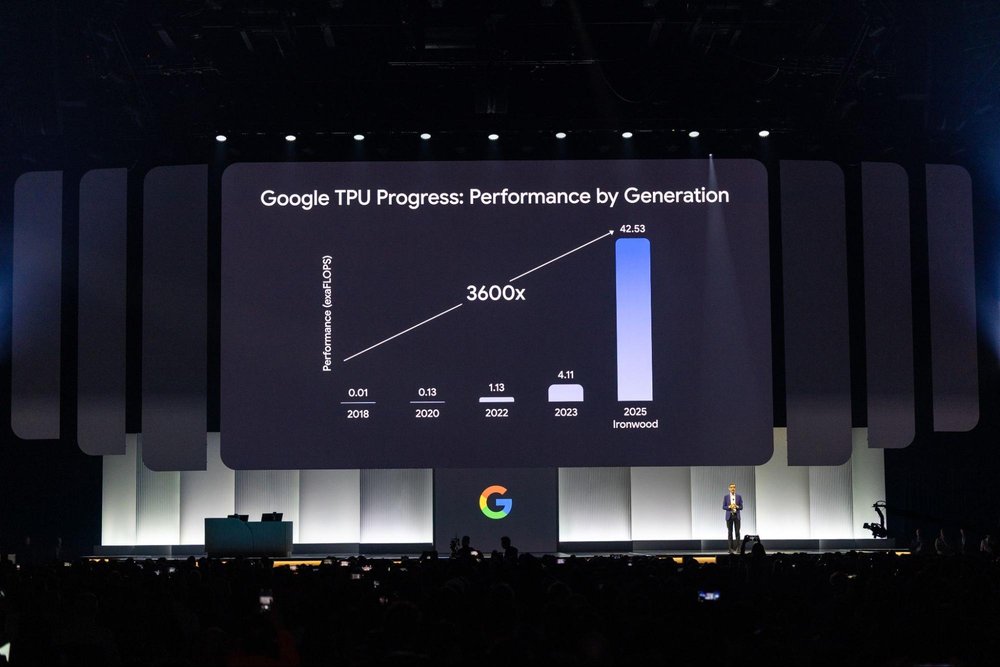

27. Ironwood: Our 7th generation TPU joins our AI-optimized hardware portfolio to power thinking, inferential AI models at scale (coming later in 2025). Read more here.

28. Google Distributed Cloud (GDC): We have partnered with NVIDIA to bring Gemini to NVIDIA Blackwell systems, with Dell as a key partner, so Gemini can be used locally in air-gapped and connected environments. Read more here.

29. Pathways on Cloud: Developed by Google DeepMind, Pathways is a distributed runtime that powers all of AI at Google, and is now available for the first time on Google Cloud.

30. vLLM on TPU: We’re bringing vLLM to TPUs to make it easy to run inference on TPUs. Customers who have optimized PyTorch with vLLM can now run inference on TPUs without changing their software stack, and also serve on both TPUs and GPUs if needed.

31. Dynamic Workload Scheduler resource management and job scheduling platform now features support for Trillium, TPU v5e, A4 (NVIDIA B200), and A3 Ultra (NVIDIA H200) VMs in preview via Flex Start mode, with Calendar mode support for TPUs coming later this month.

32. A4 and A4X VMs: We’ve significantly enhanced our GPU portfolio with the availability of A4 and A4X VMs powered by NVIDIA’s B200 and GB200 Blackwell GPUs, respectively, and A4X VMs are now in preview. We were the first cloud provider to offer both of these options.

33. NVIDIA Vera Rubin GPUs: Google Cloud will be among the first to offer NVIDIA’s next-generation Vera Rubin GPUs, which offer up to 15 exaflops of FP4 inference performance per rack.

34. Cluster Director (formerly Hypercompute Cluster) lets you deploy and manage a group of accelerators as a single unit with physically colocated VMs, targeted workload placement, advanced cluster maintenance controls, and topology-aware scheduling. New updates coming later this year include Cluster Director for Slurm, 360° observability features, and job continuity capabilities. Register to join the preview.

Application Development

Developing on top of Google Cloud, and with Google Cloud tools, gets better every day.

35. The new Application Design Center, now in preview, provides a visual, canvas-style approach to designing and modifying application templates, and lets you configure application templates for deployment, view infrastructure as code in-line, and collaborate with teammates on designs.

36. The new Cloud Hub service, in preview, is the central command center for your entire application landscape, providing insights into deployments, health and troubleshooting, resource optimization, maintenance, quotas and reservations, and support cases. Try Cloud Hub here.

37. App Hub, which models your applications as interconnected services and workloads for an application-centric view, is now integrated with over 20 Google Cloud products.

38. Application Monitoring, in public preview, supports automatically tagging telemetry (logs, metrics, and traces) with application context, application-aware alerts, and out-of-the-box application dashboards.

39. Cost Explorer, in private preview, provides visibility into granular application costs and utilization metrics, allowing you to identify efficiency opportunities; sign up here to try it out.

40. Gemini Code Assist agents can help with common developer tasks such as code migration, new feature implementation, code review, test generation, model testing, and documentation, and their progress can be tracked on the new Gemini Code Assist Kanban board.

41. Gemini Code Assist is now available in Android Studio for professional developers who want AI coding assistance with enterprise security and privacy features.

42. Gemini Code Assist tools, now in preview, help you access information from Google apps and tools from partners including Atlassian, Sentry, Snyk, and more.

43. An App Prototyping agent in preview for Gemini Code Assist within the new Firebase Studio development environment turns your app ideas into fully functional prototypes, including the UI, backend code, and AI flows.

44. Gemini Cloud Assist is integrated with Application Design Center in preview to accelerate application infrastructure design and deployment.

45. Gemini Cloud Assist Investigations leverages data in your cloud environment to accelerate troubleshooting and issue resolution. Register for the private preview here.

46. Gemini Cloud Assist is now integrated across Google Cloud services including Storage Insights, Cloud Observability, Firebase, Database Center, Flow Analyzer, FinOps Hub, as well as security- and compliance-related services.

47. FinOps Hub 2.0 now includes waste insights and cost optimization opportunities from Gemini Cloud Assist.

48. The new Enterprise tier of the Google Developer Program is in limited preview, providing a safe and affordable way to explore Google Cloud and its AI products for a set monthly cost of $75/month per seat. Learn more here.

Compute

Whatever your workload, there’s a Compute Engine virtual machine to help you run it at the price, performance and reliability levels you need.

49. New C4D VMs built on AMD’s 5th Gen EPYC processors and paired with Google Titanium deliver impressive performance gains over prior generations: up to 30% vs. C3D on the estimated SPECrate®2017_int_base benchmark. C4D is currently in preview; try it out today.

50. C4 VMs built on the 6th generation Intel Granite Rapids CPUs feature the highest frequency of any Compute Engine VM — up to 4.2 GHz.

51. C4 shapes with Titanium Local SSD offer improved performance for I/O-intensive workloads like databases and caching layers, achieving Local SSD latency reductions of up to 35%.

52. C4 bare metal instances provide performance gains of up to 35% for general compute and up to 65% for ML recommendation workloads compared to the prior generation.

53. New, larger C4 VM shapes scale up to 288 vCPU, with 2.2TB of high-performing DDR5 memory and larger cache sizes. Request preview access here.

Compute Engine also features a variety of specialized VM families and unique capabilities:

54. New H4D VMs for demanding HPC workloads are built on the 5th gen AMD EPYC CPUs, and offer the highest whole-node VM performance of more than 12,000 gflops, the highest per-core performance, and the best memory bandwidth of more than 950 GB/s of our VM families. Sign up for the H4D preview.

55. M4 VMs are certified for business-critical, in-memory SAP HANA workloads ranging from 744GB to 3TB, and for SAP NetWeaver Application Server, and offer up to 65% better price-performance and 2.25x more SAP Application Performance Standard (SAPS) compared to the previous memory-optimized M3.

56. The Z3 storage-optimized family now features new Titanium SSDs and offers nine new smaller shapes, ranging from 3TB to 18TB per instance. The Z3 family is also introducing a new storage-optimized bare-metal instance, which includes up to 72TB of Titanium SSDs and direct access to the physical server CPUs. Now in preview, register your interest here.

57. Nutanix Cloud Clusters (NC2) on Google Cloud let you run, manage, and operate apps, data, and AI across private and public clouds. Sign up for the public preview here.

58. Google Cloud VMware Engine now comes in 18 additional node shapes, bringing the total number of node shapes across VMware Engine v1 and v2 to 26.

59. Within the Titanium family, Titanium ML Adapter securely integrates NVIDIA ConnectX-7 network interface cards (NICs), providing 3.2 Tbps of non-blocking GPU-to-GPU bandwidth.

60. Titanium offload processors now integrate our GPU clusters with the Jupiter data center fabric, for greater cluster scale.

61. You can now manage managed instance groups (MIGs) as a single entity.

62. MIGs now support committed use discounts (CUDs) and reservation sharing with Vertex AI and Autopilot.

Containers & Kubernetes

The case for running on Google Kubernetes Engine (GKE) keeps on getting stronger, across an ever expanding class of workloads, most recently — AI.

63. GKE Inference Gateway offers intelligent scaling and load-balancing capabilities, helping you handle request scheduling and routing with gen AI model-aware scaling and load-balancing techniques.

64. With GKE Inference Quickstart, you can choose an AI model and your desired performance, and GKE configures the right infrastructure, accelerators, and Kubernetes resources to match.

65. In partnership with Anyscale, we announced RayTurbo on GKE, an optimized version of open-source Ray that delivers 4.5x faster data processing and requires 50% fewer nodes for serving.

66. Cluster Director for GKE (formerly Hypercompute Cluster) is now generally available, letting you deploy and manage large clusters of accelerated VMs with compute, storage, and networking — all operating as a single unit.

67. We announced performance improvements to GKE Autopilot, including faster pod scheduling, scaling reaction time, and capacity right-sizing.

68. Starting in Q3, Autopilot’s container-optimized compute platform will also be available to standard GKE clusters, without requiring a specific cluster configuration.

Customers

We shared hundreds of new customer stories across every industry and region, highlighting the ways they’re using Google Cloud to drive real impact. Here are some highlights:

69. Agoda, one of the world’s largest digital travel platforms, creates unique visuals and videos of travel destinations with Imagen and Veo on Vertex AI.

70. Bayer built an agent that uses predictive AI and advanced analytics to predict flu trends.

71. Bending Spoons integrated Imagen 3 into its Remini app to launch a popular new AI filter, processing an astounding 60 million photos per day.

72. Bloomberg Connects is using Gemini to explore new ways to help museums and other cultural institutions make their digital content accessible to more visitors.

73. Citi is using Vertex AI to rapidly deploy generative AI-powered productivity tools to more than 150,000 employees.

74. DBS, a leading Asian financial services group, is using Customer Engagement Suite to reduce customer call handling times by 20%.

75. Deutsche Bank built DB Lumina, a new Gemini-powered tool that can synthesize financial data and research, turning, for example, a report that’s hundreds of pages into a one-page brief, delivering it in a matter of seconds to traders and wealth managers.

76. Deutsche Telekom has announced an expanded strategic partnership with Google Cloud, focusing on cloud and AI integration to modernize Deutsche Telekom’s IT, networks, and business applications, including migrating its SAP landscape.

77. Dun & Bradstreet is using Security Command Center to centralize monitoring of AI security threats.

78. Fanatics is partnering with Google Cloud to use AI technology to enhance every aspect of the fan journey. With Vertex AI Search for Commerce, Fanatics has developed an intelligent search ecosystem that understands and anticipates fan preferences, improves quality assurance and delivers intelligent customer service, and more.

79. Freshfields is using Gemini for Google Workspace and Google Cloud’s Vertex AI to enhance client services, including powering Freshfields’ Dynamic Due Diligence solution.

80. Globo, Latin America’s largest media company, used Vertex AI Search to create a recommendations experience inside its streaming platform that more than doubled their click-through-play rate on videos.

81. Gordon Food Services is simplifying insight discovery and recommending next steps with Agentspace.

82. The Home Depot built Magic Apron, an agent that offers expert guidance 24/7, providing detailed how-to instructions, product recommendations, and review summaries to make home improvement easier.

83. Honeywell has incorporated Gemini into its product development.

84. KPMG is building Google AI into its newly formed KPMG Law firm and implementing Agentspace to enhance its own workplace operations.

85. L’Oreal is using Gemini, Imagen and Veo to accelerate creative ideation and production for marketing and product design, significantly speeding up workflows while maintaining ethical standards.

86. Lloyds Banking Group has taken a significant step in its strategic transformation by migrating its major platforms to Google Cloud. The transition is unlocking new opportunities to innovate with AI, enhancing the customer experience.

87. Lowe’s is revolutionizing product discovery with Vertex AI Search to generate dynamic product recommendations and address customers’ complex search queries.

88. Nevada Department of Employment, Training and Rehabilitation developed an Appeals AI Assistant powered by BigQuery and Vertex AI that synthesizes case data to help Appeals Referees make fair benefit approvals 4x faster.

89. Nokia built a coding tool to speed up app development with Gemini, enabling developers to create 5G applications faster.

90. Nuro, an autonomous driving company, uses vector search in AlloyDB to identify challenging scenarios on the road.

91. Mercado Libre deployed Vertex AI Search across 150M items in 3 pilot countries that is helping their 100M customers find the products they love faster, already delivering millions of dollars in incremental revenue.

92. Papa Johns is using AI to transform the ordering and delivery experience for its global customers. With Google Cloud’s AI, data analytics, and machine learning capabilities, Papa Johns can anticipate customer needs and personalize their pizza experience, as well as provide a consistent customer experience both inside the restaurants and online.

93. Reddit is using Gemini on Vertex AI to power “Reddit Answers,” Reddit’s AI-powered conversation platform. Additionally, Reddit is using Enterprise Search to improve its homepage experience.

94. Samsung is integrating Gemini on Google Cloud into Ballie, its newest AI home companion robot, enabling more personalized and intelligent interactions for users.

95. Seattle Children’s hospital is launching Pathway Assistant, a gen AI-powered agent with Gemini that improves clinicians’ access to complex information and the latest evidence-based best practices needed to treat patients.

96. Government of Singapore uses Google Cloud Web Risk to protect their residents online.

97. The Wizard of Oz at The Sphere is an immersive experience that reconceptualizes the 1939 film classic through the magic of AI, bringing it to life on a whole new scale for the colossal 160,000-square-foot domed screen at The Sphere in Las Vegas. It’s a collaboration between Sphere Entertainment, Google DeepMind, Google Cloud, Hollywood production company Magnopus, and five others.

98. Spotify uses BigQuery to harness enormous amounts of data to deliver personalized experiences to over 675 million users worldwide.

99. Intuit is using Google Cloud’s Document AI and Gemini models to simplify tax preparation for millions of TurboTax consumers this tax season, ultimately saving time and reducing errors.

100. United Wholesale Mortgage is using Google Cloud’s gen AI and data analytics to improve the mortgage process for 50,000 mortgage brokers and their clients, focusing on speed, efficiency, and personalized service.

101. Verizon is using Google Cloud’s Customer Engagement Suite to enhance its customer service for more than 115 million connections with AI-powered tools, like the Personal Research Assistant.

102. Vodafone used Vertex AI along with open-source tools and Google Cloud’s security foundation to establish an AI security governance layer.

103. Wayfair updates product attributes 5x faster with Vertex AI.

104. WPP built Open as a platform powered by Google models that all of its employees worldwide can use to concept, produce, and measure campaigns.

For more customer stories, check out our latest blog with 601 real AI use cases.

Databases

105. You can now search structured data in AlloyDB with Google Agentspace.

106. The next-generation of AlloyDB natural language lets you query structured data in AlloyDB securely and accurately, enabling natural language text modality in apps.

107. In AlloyDB, we’ve optimized SQL functionality spanning vector search and structured filters and joins, enabling faster vector search using the Scalable Nearest Neighbor (ScaNN) for AlloyDB index.

108. AlloyDB AI includes three new AI models: one that improves the relevance of vector search results using cross attention reranking; a multimodal embeddings model that supports text, images, and videos, and a new Gemini Embedding text model.

109. The new AlloyDB AI query engine lets developers use natural language expressions and constructs within SQL queries. Sign up for the preview of these AlloyDB features here.

110. MCP Toolbox for Databases (formerly Gen AI Toolbox for Databases) now supports Model Context Protocol (MCP) enabling seamless connections between AI agents and enterprise databases.

111. Firestore with MongoDB compatibility, in preview, lets developers take advantage of MongoDB’s API portability along with Firestore’s multi-region replication with strong consistency, virtually unlimited scalability, a 99.999% SLA, and single-digit milliseconds read latency. Get started here today.

112. The new Oracle Base Database Service offers a flexible and controllable way to run Oracle Databases in the cloud.

113. Oracle Exadata X11M is now GA, bringing the Oracle Exadata platform to Google Cloud and adding additional enterprise-ready capabilities, including customer managed encryption keys (CMEK).

114. Database Migration Service (DMS) now supports SQL Server to PostgreSQL migrations for Cloud SQL and AlloyDB, allowing you to fully execute on your database modernization strategy.

115. Cloud SQL and AlloyDB are available on C4A instances, our Arm-based Google Axion Processors delivering higher price-performance and throughput. Learn more here.

116. Database Center is now generally available and supports every database in our portfolio, providing a unified, AI-powered fleet management solution.

117. Spanner vector search is now generally available, designed to work with our SQL, Graph, Key-Value, and Full-Text Search modalities.

118. Graph Visualization for Spanner is now generally available, allowing users to visually explore valuable information from graph data.

119. Repeatable Read isolation (in preview), improved performance, and low-touch migration tooling to simplify moving workloads from MySQL to Spanner.

120. Aiven for AlloyDB Omni, a fully-managed AlloyDB Omni service from our partner Aiven that runs on AWS, Azure, and Google Cloud, is now generally available.

121. Bigtable continuous materialized views, in preview, simplify real-time updates for applications that rely on immediate reporting and insights.

122. New Cassandra-compatible APIs and live-migration tooling for zero-downtime migrations from Cassandra to Bigtable and Spanner.

123. Memorystore for Valkey is now generally available, with support for 7.2 and 8.0 engine versions.

124. Firebase Data Connect is now GA, offering the reliability of Cloud SQL for PostgreSQL with instant GraphQL APIs and type-safe SDKs.
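Several items in this list (107 and 117 in particular) combine vector search with structured filters. As a rough illustration of that combination, the sketch below filters rows on a structured column and then ranks the survivors by cosine similarity. It uses exact brute-force search in plain Python rather than the ScaNN index, and it calls no AlloyDB or Spanner API; the `filtered_search` helper, the row layout, and the two-dimensional embeddings are all hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def filtered_search(rows, query_vec, predicate, k=2):
    """Apply the structured filter first, then rank by similarity."""
    matches = [r for r in rows if predicate(r)]
    matches.sort(key=lambda r: cosine(r["embedding"], query_vec), reverse=True)
    return matches[:k]


# Hypothetical product rows with toy 2-D embeddings.
rows = [
    {"id": 1, "category": "shoes", "embedding": [1.0, 0.0]},
    {"id": 2, "category": "shoes", "embedding": [0.6, 0.8]},
    {"id": 3, "category": "hats", "embedding": [1.0, 0.1]},
    {"id": 4, "category": "shoes", "embedding": [0.0, 1.0]},
]

top = filtered_search(rows, [1.0, 0.0], lambda r: r["category"] == "shoes")
print([r["id"] for r in top])
```

Row 3 is close to the query vector but is excluded by the category filter, which is the behavior a combined filter-plus-vector query is meant to give. An approximate index such as ScaNN trades some exactness for speed at scale; this sketch does not attempt that.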

Data analytics

We announced several new innovations with our autonomous data to AI platform powered by BigQuery, alongside our unified, trusted, and conversational Looker BI platform:

125. BigQuery pipelines, now GA, supports building data pipelines.

126. BigQuery data preparation, now GA, transforms and enriches data.

127. BigQuery anomaly detection, now in preview, maintains data quality and automates metadata generation.

128. Data science agent, now GA and embedded within Google’s Colab notebook, provides intelligent model selection, scalable training, and faster iteration.

129. Looker conversational analytics, in preview, lets business users interact with data using natural language.

130. The Looker Conversational Analytics API, now in preview, lets developers build and embed conversational analytics into applications and workflows. Sign up to gain access.

131. BigQuery knowledge engine, in preview, leverages Gemini to analyze schema relationships, table descriptions, and query histories to generate metadata on the fly, model data relationships, and recommend business glossary terms.

132. BigQuery semantic search is now GA, providing AI-powered data insights across BigQuery and grounding AI and agents in business context.

133. BigQuery’s contribution analysis feature, now GA, helps you pinpoint the key factors (or combinations of factors) responsible for the most significant changes in a metric.

134. We added Gemini in BigQuery features into existing BigQuery pricing models across all BigQuery compute pricing options. Express your interest in trying out the new features.

135. BigQuery pipe syntax is GA, letting you apply operators in any order and as often as you need, and is compatible with most standard SQL operators.
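As a small illustration of the pipe syntax mentioned in item 135, the sketch below assembles a pipe-syntax query string in Python. The `pipe_query` helper and the `mydataset.orders` table with its columns are hypothetical, and the code only builds the text of the query; it does not connect to BigQuery or run anything.

```python
def pipe_query(source, *operators):
    """Join a FROM clause and pipe operators into one pipe-syntax query."""
    lines = [f"FROM {source}"]
    lines += [f"|> {op}" for op in operators]
    return "\n".join(lines)


query = pipe_query(
    "mydataset.orders",  # hypothetical table
    "WHERE status = 'shipped'",
    "AGGREGATE SUM(amount) AS total GROUP BY customer_id",
    "WHERE total > 100",  # a second WHERE, applied after the aggregation
    "ORDER BY total DESC",
    "LIMIT 10",
)
print(query)
```

The second `WHERE` plays the role that `HAVING` plays in standard SQL, which shows how operators can be repeated and placed in whatever order the data flow calls for.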

Then, for data science and analyst teams, we added AI-driven data science and workflows as part of BigQuery notebook:

136. New intelligent SQL cells understand your data’s context and provide smart suggestions as you write code, and let you join data sources directly within your notebook.

137. Native exploratory analysis and visualization capabilities in BigQuery make it easy to explore data, as well as add features to enable easier collaboration with colleagues. Data scientists can also schedule analyses to run and refresh insights periodically.

138. The new BigQuery AI query engine lets data scientists process structured and unstructured data together with added real-world context, co-processing traditional SQL alongside Gemini to inject runtime access to real-world knowledge, linguistic understanding, and reasoning abilities.

139. Google Cloud for Apache Kafka, now GA, facilitates real-time data pipelines for event sourcing, model scoring, messaging and real-time analytics.

140. Apache Spark workloads within BigQuery, now in preview, execute serverlessly, that is, on a fully managed platform.

141. New dataset-level insights in BigQuery data canvas, in preview, surface hidden relationships between tables and generate cross-table queries by integrating query usage analysis and metadata.

142. BigQuery ML includes the new AI.GENERATE_TABLE in preview to capture the output of LLM inference within SQL clauses.

143. BigQuery ML now supports open-source models as well as Anthropic’s Claude, Llama, and Mistral models, hosted on Vertex AI.

144. BigQuery vector search includes a new index type, now GA, based on Google’s ScaNN model that’s coupled with a CPU-optimized distance computation algorithm for scalable, faster and more cost-efficient processing.

145. The preview of BigQuery ML’s pre-trained TimesFM model developed by Google Research simplifies time-series forecasting.

146. We integrated new Google Maps Platform datasets directly into BigQuery, to make it easier for data analysts and decision makers to access insights.

147. In addition, Earth Engine in BigQuery brings the best of Earth Engine’s geospatial raster data analytics directly into BigQuery. Learn more here.

148. GrowthLoop introduced its Compound Marketing Engine built on BigQuery with Growth Agents powered by Gemini, so marketing can build personalized audiences and journeys that drive rapidly compounding growth.

149. Informatica expanded its services on Google Cloud to enable sophisticated analytical and AI governance use cases.

150. Fivetran introduced its Managed Data Lake Service for Cloud Storage, with native integration with BigQuery metastore and automatic data conversion to open table formats like Apache Iceberg and Delta Lake.

151. dbt is now integrated with BigQuery DataFrames, and dbt Cloud is now on Google Cloud.

152. Datadog introduced expanded monitoring capabilities for BigQuery, providing granular visibility into query performance, usage attribution, and data quality metrics.
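
To make the vector search item above (144) more concrete, here is a minimal sketch of what a nearest-neighbor query computes. It is a brute-force cosine-similarity scan in plain Python, not BigQuery's ScaNN-based index; the function names and the toy embeddings are hypothetical and exist only for illustration. An index such as ScaNN aims to approximate this kind of result without scanning every row.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def top_k(query, rows, k=2):
    """Return the k row ids most similar to the query, best first.

    This is an exhaustive scan: every stored vector is compared to the query.
    """
    scored = [(cosine_similarity(query, vec), row_id) for row_id, vec in rows.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [row_id for _, row_id in scored[:k]]


# Toy, made-up embeddings keyed by a hypothetical row id.
ROWS = {
    "doc_a": [1.0, 0.0, 0.0],
    "doc_b": [0.9, 0.1, 0.0],
    "doc_c": [0.0, 1.0, 0.0],
}

if __name__ == "__main__":
    print(top_k([1.0, 0.0, 0.0], ROWS, k=2))
```

The exhaustive scan costs one comparison per stored vector, which is the cost an approximate index is designed to avoid at scale.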

BigQuery’s autonomous data foundation provides governance, orchestration for diverse data workloads, and a commitment to flexibility via open formats. Announcements in this area include:

153. BigQuery makes unstructured data a first-class citizen with multimodal tables in preview, bringing rich, complex data types alongside structured data for unified storage and querying via the new ObjectRef data type.

154. BigQuery governance in preview provides a single, unified view for data stewards and professionals to handle discovery, classification, curation, quality, usage, and sharing.

155. The new BigQuery universal catalog brings together a data catalog (formerly known as Dataplex Catalog) and a fully managed, serverless metastore, now generally available.

156. BigQuery metastore, now GA, enables engine interoperability across BigQuery, Apache Spark, and Apache Flink engines, with support for the Iceberg Catalog.

157. BigQuery business glossary, now GA, lets you define and administer company terms, identify data stewards for these terms, and attach them to data asset fields.

158. BigQuery continuous queries, now GA, enable instant analysis and actions on streaming data using SQL, regardless of its original format.

159. BigQuery tables for Apache Iceberg, in preview, let you connect your Iceberg data to SQL, Spark, AI and third-party engines.

160. New advanced workload management capabilities, now GA, scale resources, manage workloads, and help ensure their cost-effectiveness.

161. BigQuery spend commit, now GA, simplifies purchasing, unifying spend across BigQuery data processing engines, streaming, governance, and more.

162. BigQuery DataFrames now has AI code assist capabilities in preview, letting you use natural language prompts to generate or suggest code in SQL or Python, or to explain an existing SQL query.

163. SQL translation assistance, now GA, is an AI-based translator that lets you create Gemini-enhanced rules to customize your SQL translations, to accelerate BigQuery migrations.

164. Catalog metadata export, GA, enables bulk extract of catalog entries into Cloud Storage.

165. BigQuery can now perform automatic at-scale cataloging of BigLake and object tables, now GA.

166. BigQuery managed disaster recovery is now GA, featuring automatic failover coordination, continuous near-real-time data replication to a secondary region, and fast, transparent recovery during outages.

167. New workload management capabilities in preview include reservation-level fair sharing of slots, predictability in performance of reservations, and enhanced observability through reservation attribution in billing.

Looker is adding a host of new conversational and visual capabilities, aimed at making BI accessible and useful to all users, accelerated by AI.

168. Gemini in Looker features are now available to all Looker platform users, including Conversational Analytics, Visualization Assistant, Formula Assistant, Automated Slide Generation, and LookML Code Assistant.

169. Code Interpreter for Conversational Analytics is in preview, allowing business users to perform forecasting and anomaly detection using natural language without needing deep Python expertise. Learn more and sign up for it here.

170. New Looker reports feature an intuitive drag-and-drop interface, granular design controls, a rich library of visualizations and templates, and real-time collaboration capabilities, now in the core Looker platform.

171. With Google Cloud’s acquisition of Spectacles.dev, developers can automate testing and validation of SQL and LookML changes using CI/CD practices.

Firebase

172. The new Firebase Studio, available to everyone in preview, is a cloud-based, agentic development environment powered by Gemini that includes everything developers need to create and publish production-quality full-stack AI apps quickly, all in one place. Gemini Code Assist agents are available via private preview.

173. Genkit, an open-source framework for building AI-powered applications in your preferred language, now has early support for Python and expanded support for Go. Try this template in Firebase Studio to build with Genkit.

174. Vertex AI in Firebase now includes support for the Live API for Gemini models, enabling more conversational interactions in apps such as allowing customers to ask audio questions and get responses.

175. Firebase Data Connect is now GA, offering the reliability of Cloud SQL for PostgreSQL with instant GraphQL APIs and type-safe SDKs.

176. Firebase App Hosting is also GA, providing an opinionated, git-centric hosting solution for modern, full-stack web apps.

177. A new App Testing agent within Firebase App Distribution, also in preview, prepares mobile apps for production by generating, managing, and executing end-to-end tests.

Google Cloud Consulting

Google Cloud Consulting introduced several new pre-packaged service offerings:

178. Agentspace Accelerator provides a structured approach to connecting and deploying AI-powered search within organizations, so employees can easily gain access to relevant internal information and resources when they need it.

179. Optimize with TPUs helps customers migrate workloads to our purpose-built AI chips, TPUs.

180. Oracle on Google Cloud lets customers combine Oracle databases and applications with Google Cloud’s advanced platform and AI capabilities for enhanced database and network performance.

181. We expanded access to Delivery Navigator, a series of proven delivery methodologies and best practices for migrations and technology implementations. It is now available in preview to customers as well as partners.

Networking

Google’s global network is the backbone of Google Cloud’s services, and relies on a long list of innovations.

182. Cloud WAN, a Cross-Cloud Network solution, is a fully managed, reliable, and secure enterprise backbone that makes Google’s global private network available to all Google Cloud customers. Cloud WAN delivers up to 40% improved network performance, while reducing total cost of ownership by up to 40%. Read more here.

183. The new 400G Cloud Interconnect and Cross-Cloud Interconnect, available later this year, offer up to 4x more bandwidth than our 100G Cloud Interconnect and Cross-Cloud Interconnect, providing connectivity from on-premises or other cloud environments to Google Cloud.

184. Build massive AI services with networking support for up to 30,000 GPUs per cluster in a non-blocking configuration, available in preview now.

185. Zero-Trust RDMA security helps you secure your high-performance GPU and TPU traffic with our RDMA firewall, featuring dynamic enforcement policies. Available later this year.

186. Get accelerated GPU-to-GPU communication, with up to 3.2Tbps of non-blocking GPU-to-GPU bandwidth with our high-throughput, low-latency RDMA networking, now generally available.

187. Leverage GKE Inference Gateway and Cloud Load Balancing alongside Model Armor, NVIDIA NeMo Guardrails, and Palo Alto Networks AI Runtime Security, all using Service Extensions.

188. Cloud Load Balancing has optimizations for LLM inference, letting you leverage NVIDIA GPU capacity across multiple cloud providers or on-prem infrastructure.

189. New Service Extensions plugins, powered by WebAssembly (Wasm), let you automate, extend, and customize your applications with plugin examples in Rust, C++, and Go. Support for Cloud Load Balancing is now generally available, and Cloud CDN support will follow later this year.

190. Cloud CDN’s fast cache invalidation delivers static and dynamic content at global scale with improved performance, now in preview.

191. TLS 1.3 0-RTT in Cloud CDN boosts application performance for resumed connections, now in preview.

192. App Hub provides streamlined service discovery and management by automating service discovery and cataloging.

193. App Hub service health enables resilient global services with network-driven cross-regional failover. Available later this year.

194. Later in 2025, you’ll be able to use Private Service Connect to publish multiple services within a single GKE cluster, making them natively accessible from non-peered GKE clusters, Cloud Run, or Service Mesh.
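
As a rough illustration of what the 4x bandwidth figure for 400G Cloud Interconnect (item 183) can mean in practice, the sketch below estimates bulk transfer time at line rate. It assumes an ideal link with no protocol overhead or contention; the 1 PB dataset size and the function name are made up for the example, and real-world throughput will be lower.

```python
def transfer_hours(dataset_bytes, link_gbps):
    """Ideal hours needed to move dataset_bytes over a link of link_gbps.

    link_gbps is in gigabits per second (10^9 bits/s); overhead is ignored.
    """
    bits = dataset_bytes * 8
    seconds = bits / (link_gbps * 1e9)
    return seconds / 3600


ONE_PB = 10**15  # hypothetical dataset size: 1 petabyte (decimal)

if __name__ == "__main__":
    for gbps in (100, 400):
        print(f"{gbps}G link: {transfer_hours(ONE_PB, gbps):.1f} hours")
```

Under these assumptions the same dataset takes a quarter of the time on the faster link, roughly 5.6 hours instead of 22.2.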

Then, to help you secure your workloads, we introduced enhancements to protect distributed applications and internet-facing services against network attacks:

195. The new DNS Armor detects DNS-based data exfiltration attacks performed using DNS tunneling, domain generation algorithms (DGA) and other sophisticated techniques. Available in preview later this year.

196. New hierarchical policies for Cloud Armor let you enforce granular protection of your network architecture.

197. There are new network types and firewall tags for Cloud NGFW hierarchical firewall policies, coming this quarter in preview.

198. Cloud NGFW adds new layer 7 domain filtering, allowing firewall administrators to monitor and control outbound web traffic to only allowed destinations. Coming later in 2025.

199. Inline network DLP for Secure Web Proxy and Application Load Balancer provides real-time protection for sensitive data-in-transit via integration with third-party (Symantec DLP) solutions using Service Extensions. In preview this quarter.

200. Network Security Integration, now generally available, helps you maintain consistent policies across hybrid and multi-cloud environments without changing your routing policies or network architecture.

201. Imperva Application Security is integrated with Cloud Load Balancing, via Service Extensions, and is now available in the Google Cloud Marketplace.
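
For readers unfamiliar with domain generation algorithms (item 195), the sketch below shows one simple, widely known heuristic for spotting machine-generated names: character entropy of the leftmost DNS label. This is not how DNS Armor works, which the announcement does not describe; the function names, the 3.5-bit threshold, and the sample domains are all hypothetical.

```python
import math
from collections import Counter


def shannon_entropy(label):
    """Shannon entropy of a string, in bits per character."""
    if not label:
        return 0.0
    counts = Counter(label)
    total = len(label)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_generated(domain, threshold=3.5):
    """Flag a domain whose leftmost label has high character entropy.

    The threshold is an arbitrary illustrative value, not a tuned one.
    """
    label = domain.split(".")[0].lower()
    return shannon_entropy(label) > threshold


if __name__ == "__main__":
    for name in ("google.com", "x7k2q9zv4jw8pt3m.example"):
        print(name, looks_generated(name))
```

A single-feature check like this produces false positives and negatives on its own; it is meant only to show why random-looking names stand out statistically.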

Additional partner announcements

We’ve always taken an open approach to AI, and the same is true for agentic AI. With updates this week at Next ’25, we’re now integrating partners at every layer of our agentic AI stack to enable multi-agent ecosystems. Here’s a closer look:

202. Expert AI services: Our ecosystem of services partners — including Accenture, BCG, Capgemini, Cognizant, Deloitte, HCLTech, Infosys, KPMG, McKinsey, PwC, TCS, and Wipro — have actively contributed to the A2A protocol and will support its implementation.

203. AI Agent Marketplace: We launched a new AI Agent Marketplace, a dedicated section within Google Cloud Marketplace that allows customers to browse, purchase, and manage AI agents built by partners including Accenture, BigCommerce, Deloitte, Elastic, UiPath, Typeface, and VMware, with more launching soon.

204. Power agents with all your enterprise data: We are partnering with NetApp, Oracle, SAP, Salesforce, and ServiceNow to allow agents to access data stored in these popular platforms.

205. Better field alignment and co-sell: We introduced new processes to better capture and share partners’ critical contributions with our sales team, including increased visibility into co-selling activities like workshops, assessments, and proofs-of-concept, as well as partner-delivered services.

206. More partner earnings: We are evolving incentives to help partners capitalize on the biggest opportunities, such as a 2x increase in partner funding for AI opportunities over the past year. We also introduced new AI-powered capabilities in Earnings Hub, our destination for tracking incentives and growth.

207. We partnered with Adobe, the leader in creativity, to bring our advanced Imagen 3 and Veo 2 models to applications like Adobe Express.

208. Together with Salesforce’s Agentforce, we’re leading the digital labor revolution, driving massive gains in human augmentation, productivity, efficiency, and customer success.

Security

We offer critical cyber defense capabilities for today’s challenging threat environment, and introduced a number of new innovations:

209. Google Unified Security: This solution brings together our visibility, threat detection, AI powered security operations, continuous virtual red-teaming, the most trusted enterprise browser, and Mandiant expertise — in one converged security solution running on a planet-scale data fabric.

210. Alert triage agent: This agent performs dynamic investigations on behalf of users. It analyzes the context of each alert, gathers relevant information, and renders a verdict on the alert, along with a history of the agent’s evidence and decision making.

211. Malware analysis agent: This agent investigates whether code is safe or harmful. It builds on Code Insight to analyze potentially malicious code, including the ability to create and execute scripts for deobfuscation.

212. In Google Security Operations, new data pipeline management capabilities can help customers better manage scale, reduce costs, and satisfy compliance mandates.

213. We also expanded our Risk Protection Program, which provides discounted cyber-insurance coverage based on cloud security posture, to welcome new program partners Beazley and Chubb, two of the world’s largest cyber-insurers.

214. New employee phishing protections in Chrome Enterprise Premium use Google Safe Browsing data to help protect employees against lookalike sites and portals attempting to capture credentials.

215. The Mandiant Retainer provides on-demand access to Mandiant experts. Customers now can redeem prepaid funds for investigations, education, and intelligence to boost their expertise and resilience.

216. Mandiant Consulting is also partnering with Rubrik and Cohesity to create a solution to minimize downtime and recovery costs after a cyberattack.

Storage

Storage is a critical component for minimizing bottlenecks in both training and inference, and we introduced new innovations to help:

217. We expanded Hyperdisk Storage Pools to store up to 5 PiB of data in a single pool — a 5x increase from before.

218. Hyperdisk Exapools is the biggest and fastest block storage in any public cloud, with exabytes of storage delivering terabytes per second of performance.

219. Hyperdisk ML can now hydrate from Cloud Storage using GKE volume populator.

220. Rapid Storage is a new Cloud Storage zonal bucket with <1ms random read and write latency, and compared to other leading hyperscalers, 20x faster data access, 6 TB/s of throughput, and 5x lower latency for random reads and writes.

221. Anywhere Cache is a new strongly consistent cache that works seamlessly with existing regional buckets to cache data within a selected zone. It reduces latency by up to 70% and provides up to 2.5 TB/s of throughput, accelerating AI workloads and maximizing goodput by keeping data close to GPUs and TPUs.

222. The new Google Cloud Managed Lustre is a high-performance, fully managed parallel file system built on DDN EXAScaler. This zonal storage solution provides PB scale, <1ms latency, millions of IOPS, and TB/s of throughput for AI workloads.

223. Storage Intelligence, the industry’s first offering enabling customers to generate storage insights specific to their environment by querying object metadata at scale, uses LLMs to provide insights into data estates, as well as take actions on them.

Startups

224. We announced a significant new partnership with the leading venture capital firm Lightspeed, which will make it easier for Lightspeed-backed startups to access technology and resources through the Google for Startups Cloud Program. This includes upwards of $150,000 in cloud credits for Lightspeed’s AI portfolio companies, on top of existing credits available to all qualified startups through the Google for Startups Cloud Program.

225. The new Startup Perks program provides early stage startups with preferred access to solutions from our partners like Datadog, Elastic, ElevenLabs, GitLab, MongoDB, NVIDIA, Weights & Biases, and more.

226. Google for Startups Cloud Program members will receive an additional $10,000 in credits to use exclusively on Partner Models through Vertex AI Model Garden, so they can quickly start using both Gemini models and models from partners like Anthropic and Meta.

Google Workspace: AI-powered productivity

Gemini powers best-in-class AI capabilities not only as a model, but also through products like Google Workspace, which includes popular apps like Gmail, Docs, Drive and Meet. We announced a number of new Workspace innovations to further empower users with AI, including:

227. Help me Analyze: This powerful feature transforms Google Sheets into your personal business analyst, intelligently identifying insights from your data without the need for explicit prompting, empowering you to make data-driven decisions with ease.

228. Docs Audio Overview: With audio overviews in Docs, you can create high-quality, human-like audio read-outs or podcast-style summaries of your documents.

229. Google Workspace Flows: Workspace Flows helps you automate daily work and repetitive tasks like managing approvals, researching customers, organizing your email, summarizing your daily agenda, and much more.

There’s no place like home

And with that, we’ve come to the end of Next 25. We hope you’ve enjoyed your time in Las Vegas, and wish you safe travels.

See you in Vegas next year for Google Cloud Next: April 22 – 24, 2026.

1. Grounding with Google Maps is currently available as an experimental release in the United States, providing access to only places data in the United States.
