GCP – How Global Payments built a resilient architecture for scale with Cloud SQL

Editor’s note: When payments are your product, downtime isn’t an option. To meet the demands of global availability, Global Payments partnered with the Google Cloud product team to architect a resilient, multi-region solution on Cloud SQL Enterprise Plus, improving uptime and streamlining disaster recovery. In this blog, Principal Architect Govindaraj Palanisamy shares how his team is using Cloud SQL to deliver always-on performance.

At Global Payments, we power payment services around the world, across every kind of industry, from schools and hospitals to stadiums and gas stations. Whether it’s a small daily purchase or a critical invoice, every transaction matters. When people go to pay their bills, buy a snack, or get through a turnstile, they expect things to just work, without delay. That expectation becomes even more critical for our Tier 1 systems, like the portals that handle invoice and utility payments. These apps need to be up 24/7, with near-zero tolerance for downtime or data loss. Meeting that commitment means powering seamless transactions, and our collaboration with Google Cloud on resilient architecture is key to delivering a superior customer experience and achieving scalability.

To meet those demands, we chose Cloud SQL Enterprise Plus edition, which provides the kind of performance, scalability, and business continuity we need for global operations. Today, we use Cloud SQL for multiple workloads, including Cloud SQL for SQL Server for high-priority transactions and Cloud SQL for PostgreSQL and Cloud SQL for MySQL for value-added services. This adoption across all three database engines underscores our confidence in Cloud SQL’s capabilities and, by extension, in Google Cloud’s platform. And thanks to recent innovations from Google Cloud, we’re getting even more out of the platform.

High expectations, high availability

For our Tier 1 workloads, we require 99.99% uptime, rapid failover, and recovery point objectives (RPO) of under a minute. These are systems that can’t afford to go down, even for maintenance. With Cloud SQL Enterprise Plus, we get:

- Near-zero planned downtime (often under 1 second)
- Less than a minute RTO and zero RPO using multi-zone HA configurations with synchronous replication
- Easy disaster recovery orchestration and testing using advanced disaster recovery switchover and write endpoints
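To put the 99.99% target in concrete terms, the short sketch below converts an availability percentage into a downtime budget. It is plain arithmetic, not anything specific to Cloud SQL; the function name `downtime_budget` and the 365-day and 30-day periods are assumptions chosen for illustration.

```python
from datetime import timedelta


def downtime_budget(availability_pct: float, period: timedelta) -> timedelta:
    """Maximum downtime allowed over `period` at the given availability target."""
    if not 0 < availability_pct <= 100:
        raise ValueError("availability_pct must be in (0, 100]")
    # The unavailable fraction of the period is the whole budget.
    return period * (1 - availability_pct / 100)


# Illustrative periods (assumptions): a 365-day year and a 30-day month.
yearly = downtime_budget(99.99, timedelta(days=365))
monthly = downtime_budget(99.99, timedelta(days=30))

print(f"99.99% over 365 days: {yearly.total_seconds() / 60:.1f} minutes")
print(f"99.99% over 30 days:  {monthly.total_seconds() / 60:.1f} minutes")
```

At 99.99%, the budget works out to roughly 52.6 minutes over a year, or about 4.3 minutes over 30 days, which is why sub-second planned maintenance and sub-minute failover matter for workloads at this tier.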

For less critical but still important workloads, like merchant-facing services, we use similar configurations with slightly more flexible recovery windows. But in all cases, we ensure that data protection, performance, and compliance requirements, including the Payment Card Industry Data Security Standard (PCI DSS), GDPR, and the NIST Cybersecurity Framework, remain paramount.

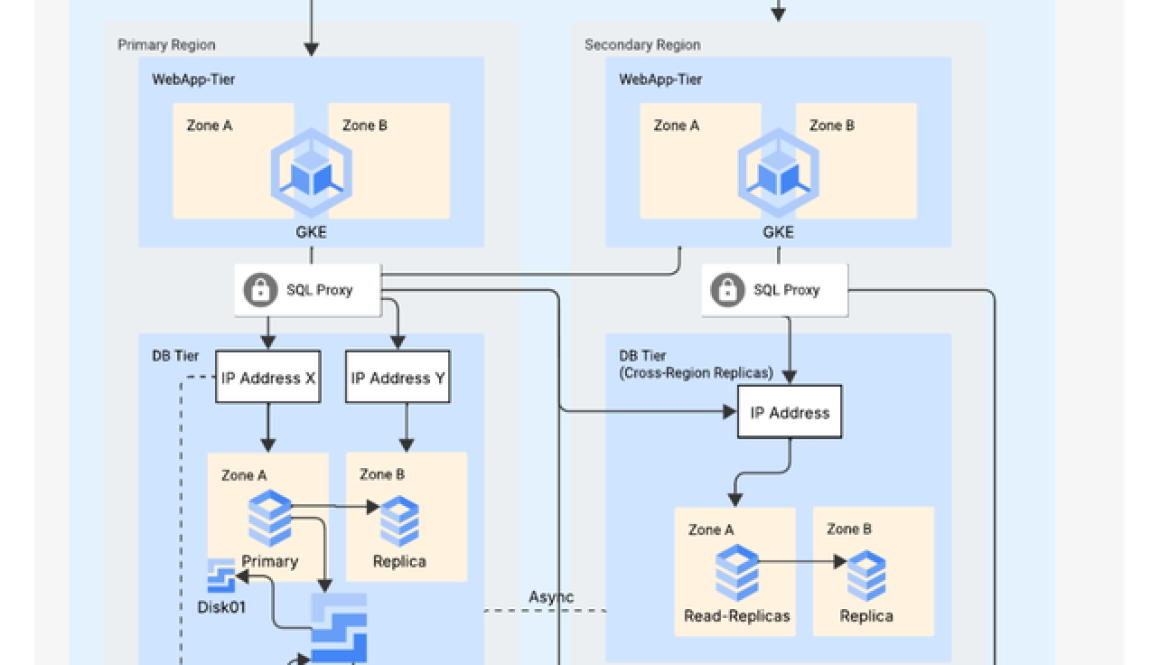

Architecture built for resilience

Our current deployment spans three Google Cloud regions, with each region running web and application tiers in Google Kubernetes Engine (GKE) and connecting to Cloud SQL through a Cloud SQL Auth Proxy. Every Cloud SQL database is replicated across zones and regions. Read replicas support low-latency reads, and cascading replication helps route read traffic away from write-heavy nodes to balance the load.

Fig. 1 – Global Payments’ Architecture Diagram featuring Cloud SQL & GKE
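The read/write separation described above can be pictured with a small application-side router. This is a minimal sketch under stated assumptions, not Global Payments’ implementation: the class `EndpointRouter`, the endpoint hostnames, and the "statement starts with SELECT" rule are all hypothetical, and a production setup would also need to handle transactions, locking reads, and replica lag.

```python
from dataclasses import dataclass, field
from itertools import cycle
from typing import Iterator


@dataclass
class EndpointRouter:
    """Sends writes to one primary endpoint and spreads reads across replicas."""

    write_endpoint: str
    read_replicas: list[str]
    _reads: Iterator[str] = field(init=False, repr=False)

    def __post_init__(self) -> None:
        # Fall back to the primary when no replica is configured.
        self._reads = cycle(self.read_replicas or [self.write_endpoint])

    def endpoint_for(self, sql: str) -> str:
        """Return the endpoint a statement should be sent to."""
        first_word = sql.lstrip().split(None, 1)[0].upper() if sql.strip() else ""
        if first_word == "SELECT":
            # Round-robin over the replicas for plain reads.
            return next(self._reads)
        # Anything else (INSERT, UPDATE, DDL, empty input) goes to the primary.
        return self.write_endpoint


# Hypothetical hostnames, for illustration only.
router = EndpointRouter(
    write_endpoint="primary.example.internal",
    read_replicas=["replica-a.example.internal", "replica-b.example.internal"],
)
print(router.endpoint_for("SELECT * FROM invoices WHERE id = 1"))
print(router.endpoint_for("UPDATE invoices SET paid = TRUE WHERE id = 1"))
```

Keeping the write path on a single endpoint is what lets reads scale out independently: adding a replica only changes the list passed to the router, not the write path.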

That distributed topology gives us options. With a three-region deployment, we’re able to keep a failover-ready region available—even in the rare event of a dual-zone failure—so we can shift traffic without disruption. If we anticipate traffic spikes—for example, during a seasonal billing surge—we can scale our read replicas to maintain consistent performance. And with point-in-time recovery and backup retention decoupled from the database instance, we’re covered even if an instance is deleted.
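As a rough illustration of why a third region helps, the sketch below picks a serving region from a priority list given a health map. The function `pick_serving_region` and the region labels are hypothetical; in practice the switchover itself would be driven by Cloud SQL’s disaster recovery features rather than by application code like this.

```python
from typing import Mapping, Optional, Sequence


def pick_serving_region(
    priority: Sequence[str], healthy: Mapping[str, bool]
) -> Optional[str]:
    """Return the first healthy region in priority order, or None if all are down."""
    for region in priority:
        # A region missing from the health map is treated as unhealthy.
        if healthy.get(region, False):
            return region
    return None


# Hypothetical region labels, not Global Payments' actual regions.
priority = ["region-a", "region-b", "region-c"]
health = {"region-a": False, "region-b": True, "region-c": True}
print(pick_serving_region(priority, health))  # region-b
```

With only two regions, losing the preferred one leaves a single candidate and no spare; with three, a failover-ready region remains even while one is unavailable.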

Uptime well spent

The results of our Cloud SQL deployment have been substantial:

- Near-zero downtime during maintenance
- Consistent performance with read/write separation
- Streamlined disaster recovery testing (including quarterly failover drills driven by compliance requirements)
- Up to 60% reduction in operational overhead

We also use query insights for automated alerting and performance monitoring, giving us better visibility into key metrics as we scale.

Cloud SQL’s managed services free up our team to focus on innovation rather than on managing database infrastructure. And with Cloud SQL Enterprise Plus, we have the performance and availability guarantees our clients expect. We’re already exploring new capabilities like managed connection pooling and read pools, which promise to simplify scaling and reduce latency even further. These are the kinds of enhancements that let us keep growing without outgrowing our infrastructure.

At Global Payments, reliability is business critical. Cloud SQL helps us deliver it.

Editor’s note: Govindaraj Palanisamy originally shared Global Payments’ story onstage at Google Cloud Next. During this session, Google Cloud also announced support for C4A virtual machines, powered by Google Axion, in Cloud SQL and AlloyDB. These VMs offer up to 65% better price-performance than current-generation x86 instances and up to 2x higher throughput than comparable Arm-based offerings. C4A is built to handle the scale, speed, and efficiency today’s enterprise databases demand.

Watch the full session or read the Axion announcement blog to learn more. And check out our web page to dive into the power of Cloud SQL.
