GCP – The secret to document intelligence: Box builds Enhanced Extract Agents using Google’s Agent-2-Agent framework

Box is one of the original information sharing and collaboration platforms of the digital era. They’ve helped define how we work, and have continued to evolve those practices alongside successive waves of new technology. One of the most exciting advances of the generative AI era is that now, with all the data that Box users have stored, they can get considerably more value out of those files by using AI to search and synthesize their information in new ways.

That’s why Box created Box AI Agents, to intelligently discern and structure complex unstructured data. Today, we’re excited to announce the availability of the Box AI Enhanced Extract Agent. The Enhanced Extract Agent runs on Google’s most advanced Gemini 2.5 models, and it also features Google’s Agent2Agent (A2A) protocol, which allows secure connection and collaboration between AI agents across dozens of platforms in the A2A network.

The Box AI Enhanced Extract Agent gives enterprise users confidence in their AI, helping them overcome any hesitations they might feel about using gen AI technology for business-critical tasks.

In this post, we’ll take a closer look at how our teams created the Box AI Enhanced Extract Agent and what others building new agentic AI systems might consider when developing their own solutions.

Getting more content with confidence

When it comes to data extraction, simply pulling out text from documents is no longer sufficient. Businesses also need uncertainty estimation, which we define as understanding how uncertain the model is about a particular extraction. This is paramount when an organization is processing vast quantities of documents (for example, searching tens of thousands of items and extracting all the relevant and related values from each one) and needs to guide human review effectively and with confidence. The goal isn’t just high accuracy, but also a reliable confidence score for each piece of extracted data.

With the Box AI Enhanced Extract Agent, we wanted to transform how businesses interact with their most complex content, whether that’s scanned PDFs, images, slides, or other diverse materials, and then turn it all into structured, actionable intelligence.

Box and Box AI are already available on Google Cloud Marketplace.

For instance, financial services organizations can automate loan application reviews by accurately extracting applicant details and income data; legal teams can accelerate discovery by pinpointing critical clauses in contracts; and HR departments can streamline onboarding by processing new hire paperwork automatically. In each of these cases, all extracted data like key dates and contractual terms can be validated by the crucial confidence scores that this Box and Google collaboration delivers. This confidence score helps ensure reliable, AI-vetted information powers efficient operations and proactive compliance without extensive manual effort.

Powering enhanced data extraction with Gemini 2.5 Pro

Box’s Enhanced Extract Agent uses the multimodal, agentic reasoning capabilities of Google’s Gemini 2.5 Pro as its core intelligence engine. However, the relationship goes beyond simple API calls.

“Gemini 2.5 Pro is way ahead due to its multimodal, deep reasoning, and code generation capabilities in terms of accuracy compared to previous models for these complex extraction tasks,” Ben Kus, CTO at Box, said. “These capabilities make Gemini a crucial component for achieving Box’s ambitious goals of turning unstructured content into structured content through enhanced extraction agents.”

To build robust confidence scores and enable deeper understanding, Box’s AI Agents acquire specific, granular information that the Gemini 2.5 Pro model is uniquely adept at providing.

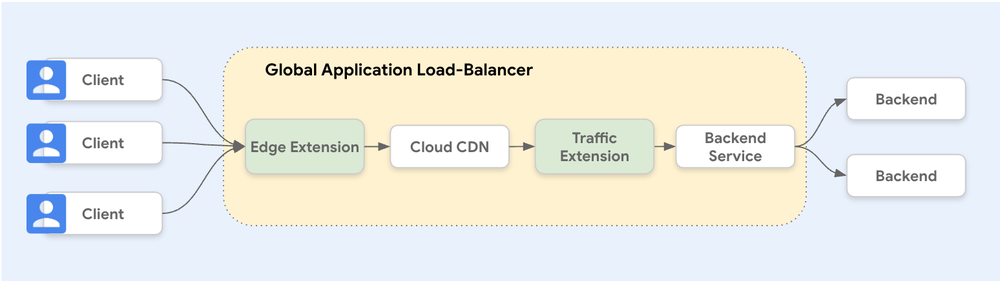

An agent-to-agent protocol for deeper collaboration

Box is championing an open AI ecosystem by embracing Google Cloud’s Agent2Agent protocol, enabling all Box AI Agents to securely collaborate with diverse external agents from dozens of partners (a list that keeps growing). By adopting the latest A2A specification, Box AI can ensure efficient and secure communication for complex, multi-system processes. This lets organizations run complex, cross-system workflows, bringing intelligence directly to where content lives and boosting productivity through seamless agent collaboration. This advanced interplay uses the proposed agent-to-agent protocol in the following ways:

- Box’s AI Agents: Orchestrate the overall extraction task, manage user interactions, apply business logic, and, crucially, perform the confidence scoring and uncertainty analysis.

- Google’s Gemini 2.5 Pro: Provides the core text comprehension, reasoning, and generation. In this enhanced protocol, Gemini models also aim to furnish deeper operational data (like token likelihoods) to their counterpart.

This protocol, for example, allows Box’s Enhanced Extract Agent to “look under the hood” of Gemini 2.5 Pro to a greater extent than typical AI model integrations. This deeper insight is essential for:

- Building Reliable Confidence Scores: Understanding how certain Gemini 2.5 Pro is about each generated token allows Box AI’s enhanced data extraction capabilities to construct more accurate and meaningful confidence metrics for the end-user.

- Enhancing Robustness: Another key area of focus is model robustness, meaning consistent outputs across runs. As Kus put it: “For us robustness is if you run the same model multiple times, how much variation we would see in the values. We want to reduce the variations to be minimal. And with Gemini, we can achieve this.”
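To make the two ideas above concrete, here is a minimal Python sketch. It is not Box’s scoring method, which this post does not describe. It assumes per-token log-probabilities are available for an extracted value and uses their geometric mean as one simple confidence heuristic, and it measures robustness as the share of repeated runs that agree on the most common value. The function names and all sample numbers are hypothetical.

```python
import math
from collections import Counter
from typing import Sequence, Tuple


def confidence_from_logprobs(token_logprobs: Sequence[float]) -> float:
    """Geometric-mean token probability of one extracted value, in (0, 1].

    Assumption: this is one common heuristic, not the scoring Box uses.
    """
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def run_agreement(values: Sequence[str]) -> Tuple[str, float]:
    """Return the most common value across repeated runs and its share of runs."""
    if not values:
        raise ValueError("need at least one run")
    # Light normalization so trivial whitespace or case changes do not count as variation.
    normalized = [v.strip().lower() for v in values]
    value, count = Counter(normalized).most_common(1)[0]
    return value, count / len(normalized)


# Hypothetical log-probabilities for the tokens of two extracted field values.
confident = confidence_from_logprobs([-0.01, -0.02, -0.005])
uncertain = confidence_from_logprobs([-0.9, -1.6, -0.4])
print(f"confident={confident:.3f} uncertain={uncertain:.3f}")

# Hypothetical results of running the same extraction five times.
value, agreement = run_agreement(
    ["2025-03-01", "2025-03-01", "2025-03-01", "2025-03-10", "2025-03-01"]
)
print(f"value={value} agreement={agreement:.2f}")
```

A production system would likely calibrate such raw scores against human-reviewed labels before showing them to users, since a high token probability does not by itself guarantee a correct extraction.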

Furthering this commitment to an open and extensible ecosystem, Box AI Agents will be published on Agentspace and will be able to interact with other agents using the A2A protocol. Box has also published support for Google’s Agent Development Kit (ADK) so developers can build Box capabilities into their ADK agents, truly integrating Box intelligence across their enterprise applications.

The Google ADK, an open-source, code-first Python toolkit, empowers developers to build, evaluate, and deploy sophisticated AI agents with flexibility and control. To expand these capabilities, we have created the Box Agent for Google ADK, which allows developers to integrate Box’s Intelligent Content Management platform with agents built with Google ADK, enabling the creation of custom AI-powered solutions that enhance content workflows and automation.

This integration with ADK is particularly valuable for developers, as it allows them to harness the power of Box’s Intelligent Content Management capabilities using familiar software development tools and practices to craft sophisticated AI applications. Together, these tools provide a powerful, streamlined approach to build innovative AI solutions within the Box ecosystem.

Continual learning and human-in-the-loop, for the most flexible AI

The vision for enhanced extract includes a dynamic, self-improving system. “We want to implement that cycle so that you can get higher and higher confidence,” Kus, Box’s CTO, said. “This involves a human-in-the-loop process where low-confidence extractions are reviewed, and this feedback is used to refine the system.”
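The review cycle described here can be sketched as a simple triage step. This is an illustrative Python sketch only. The `ExtractedField` type, the `triage` function, the 0.85 threshold, and the sample values are all assumptions made for the example, not part of Box’s product.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # assumed to lie in [0, 1]


def triage(
    fields: List[ExtractedField], threshold: float = 0.85
) -> Tuple[List[ExtractedField], List[ExtractedField]]:
    """Split fields into auto-accepted ones and ones queued for human review.

    The review queue is sorted so the least confident fields come first.
    The threshold is a hypothetical value that a team would tune per use case.
    """
    accepted = [f for f in fields if f.confidence >= threshold]
    needs_review = sorted(
        (f for f in fields if f.confidence < threshold),
        key=lambda f: f.confidence,
    )
    return accepted, needs_review


# Hypothetical extraction results for one loan application.
fields = [
    ExtractedField("applicant_name", "Jane Doe", 0.97),
    ExtractedField("annual_income", "84,000", 0.62),
    ExtractedField("loan_amount", "250,000", 0.91),
    ExtractedField("signing_date", "2025-03-01", 0.40),
]
accepted, needs_review = triage(fields)
print("accepted:", [f.name for f in accepted])
print("needs review:", [f.name for f in needs_review])
```

Corrections that reviewers make to the queued fields are the feedback that later extractions can reuse.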

Here, the flexibility of Gemini 2.5 Pro, particularly concerning fine-tuning, enables continual improvement. Box is exploring advanced continual learning approaches, including:

- In-context learning: Providing corrected examples within the prompt to Gemini 2.5 Pro.

- Supervised fine-tuning: Google Cloud’s Vertex AI allows Box to store the fine-tuned weights in the company’s system and then just use them to run their fine-tuned model.

Box AI’s Enhanced Extract Agent would manage these fine-tuned adaptations (for example through small LoRA layers specific to a customer or document template) and provide them to the Gemini 2.5 Pro agent at inference time. “Gemini 2.5 Pro can be used to leverage these adaptations efficiently, using the context caching capability of Gemini models on Vertex AI to tailor its responses for specific, high-value extraction tasks using in-context learning. This allows for ‘true adaptive learning,’ where the system continuously improves based on user feedback and specific document nuances,” Kus said.
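As a rough illustration of the in-context learning approach listed above, the following Python sketch assembles a prompt that carries human-corrected examples ahead of the new document. The `Correction` type, the `build_extraction_prompt` function, and the prompt wording are hypothetical. The sketch only builds the prompt text and does not call any model API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Correction:
    document_snippet: str  # text a reviewer looked at
    field: str             # field the reviewer corrected
    corrected_value: str   # value the reviewer confirmed


def build_extraction_prompt(
    field: str,
    document_text: str,
    corrections: List[Correction],
    max_examples: int = 3,
) -> str:
    """Build a few-shot prompt from the most recent corrections for one field."""
    relevant = [c for c in corrections if c.field == field]
    # Keep only the newest examples; guard against a non-positive limit.
    relevant = relevant[-max_examples:] if max_examples > 0 else []

    lines = [f"Extract the field '{field}' from the document."]
    if relevant:
        lines.append("Reviewed examples:")
    for i, c in enumerate(relevant, start=1):
        lines.append(f"Example {i} document: {c.document_snippet}")
        lines.append(f"Example {i} {field}: {c.corrected_value}")
    lines.append(f"Document: {document_text}")
    lines.append(f"{field}:")
    return "\n".join(lines)


# Hypothetical reviewer corrections collected from earlier low-confidence extractions.
corrections = [
    Correction("This Agreement takes effect on 1 March 2025.", "effective_date", "2025-03-01"),
    Correction("Total fees shall not exceed USD 10,000.", "fee_cap", "10000 USD"),
    Correction("Effective as of the 15th of June, 2024.", "effective_date", "2024-06-15"),
]
prompt = build_extraction_prompt(
    "effective_date",
    "The term begins on January 5, 2026 and runs for two years.",
    corrections,
)
print(prompt)
```

Because the reviewed examples form a stable prefix that many requests share, they are the kind of content that context caching is meant to reuse, although wiring that up is outside this sketch.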

The future: Premium document intelligence powered by advanced AI collaboration

The Enhanced Extract Agent, underpinned by Gemini 2.5 Pro’s features such as multimodality, intelligent reasoning, planning and tool-calling, and large context windows, is envisioned as a key differentiator that Box leverages in developing its AI Hub and Agent family. Box views the Enhanced Extract Agent as a fundamental way in which organizations can build more confidence in how they deploy AI in the enterprise.

For the Google team, it’s been exciting to see the production-grade, scalable use of our Gemini models by Box. Their solution provides not only extracted data but also metadata semantics that enable a high degree of confidence, along with a system that uses Box content and agents on top of Gemini models so the Enhanced Extract Agent can adapt and learn over time.

The ongoing collaboration between Box and Google Cloud focuses on unlocking the full potential of models like Gemini 2.5 Pro for complex enterprise use cases, which are rapidly redefining the future of work and paving the way for the next generation of document intelligence powering the agentic workforce.

To reimagine your data, your assets, and your workplace, access Box and Box AI now in Google Cloud Marketplace.


![[2]](https://storage.googleapis.com/gweb-cloudblog-publish/original_images/2_BNE1Unf.gif)